
http://bit.ly/2Kg7NIR

Study Shows High Ranking Websites Are Neglecting Accessibility via @MattGSouthern http://bit.ly/2MiaWu9

According to a recent study, accessibility for visually impaired and blind users is the most neglected aspect of technical optimization.

The study, from Searchmetrics, used Google Lighthouse to test how well high-ranking websites are technically optimized. For the most part, the results were as expected: high-ranking websites tend to be fast, use the latest web technologies, and provide an adequate level of security.

What stood out most was that many sites that rank highly on Google are not doing enough to make their pages accessible to people with disabilities. The average accessibility score for sites appearing in the top 20 positions on Google was 66.6 out of 100. That is the lowest of the four Lighthouse categories analyzed in the study.

The Lighthouse accessibility score is based on how a site performs on tests covering areas such as color contrast. It also checks whether images, buttons, and form fields have been given meaningful names and descriptions.

Daniel Furch, Director of Marketing EMEA at Searchmetrics, commented on these results:

For more information about Google Lighthouse ranking factors in 2019, download the full study here.

SEO via Search Engine Journal http://bit.ly/1QNKwvh May 31, 2019 at 05:03PM


http://bit.ly/2W4RwIM

Google’s John Mueller Answers Question About Negative SEO Attack via @martinibuster http://bit.ly/2EORqj0

In a Webmaster Hangout, Google’s John Mueller answered a question from a web publisher who asked what to do about a suspected negative SEO attack. The publisher wanted to know whether he should wait until he received a manual action from Google. Here is the question:

John Mueller’s answer reiterated that Google’s algorithm already ignores spammy links.

John Mueller then stated that the links were likely normal spam links.

Normal spam links happen naturally all the time, and have for as long as I can remember. I believe spammers think that linking to a high-ranking site will fool Google into treating them as an authority hub and overlooking their spammy links. Of course, that does not work.

An old SEO myth holds that linking out to a highly ranked site will help your own site rank better. If you’re interested in the origin of that myth, check out this research paper published in 1998 (yes, 1998; I told you it was an old myth!): Authoritative Sources in a Hyperlinked Environment (PDF)

Here’s what John Mueller said:

Use the Disavow Tool if You’re Worried

Google’s Mueller then went on to recommend using the disavow tool to calm your nerves if you are really worried about it. This is what John Mueller said:

Now, I have a feeling that some might try to make a big deal out of that last statement, “That’s not something our algorithms would try to judge for your website,” and start reading into it. Anyone who does so is taking it out of context and twisting it to mean something else.

John Mueller continued:

Google Can Handle Normal Spammy Links

John Mueller again brought the topic of negative SEO back to the idea that Google can handle normal spammy links.

Disavow Tool Only for Extreme Cases

John Mueller ended by stating that the disavow tool is meant for extreme cases only. It is not a tool to be used widely. Quite the opposite: it should rarely be used. Here’s what he said:

Google Focuses on Positive Signals?

Google has been ignoring links in one way or another since the very beginning. The idea is to remove as much irrelevant noise as possible so that what you’re left with is a pure signal: the signals that matter.

One example of this concept is how Google ignores “powered by” links in a footer. Another is the way Google treats a sitewide link from one site to another as a single link rather than as thousands of links. Both are examples of Google ignoring irrelevant links in order to get to the link signals that matter.

Getting back to negative SEO and spammy links, Google’s algorithm ignores links from outside normal link neighborhoods. Suppose a site has strong quality signals, contains great content, and users love it. In that case, Google can rank it on those merits and simply disregard the spammy links.

What would be the point of punishing a site by ignoring all the positive signals it contains? The user loses, and so does Google. Ignoring spammy links and judging a site by its positive qualities makes much more sense. Viewed from that perspective, Google’s insistence that publishers not worry about spammy links, including adult links, makes more sense.

If you wish to read more about negative SEO, consider reading: Attacked by Negative SEO? Lost Rankings? Read This

Watch the Webmaster Hangout here.

Screenshots by Author, Modified by Author

SEO via Search Engine Journal http://bit.ly/1QNKwvh May 31, 2019 at 04:08PM

http://bit.ly/2Z0x5yU

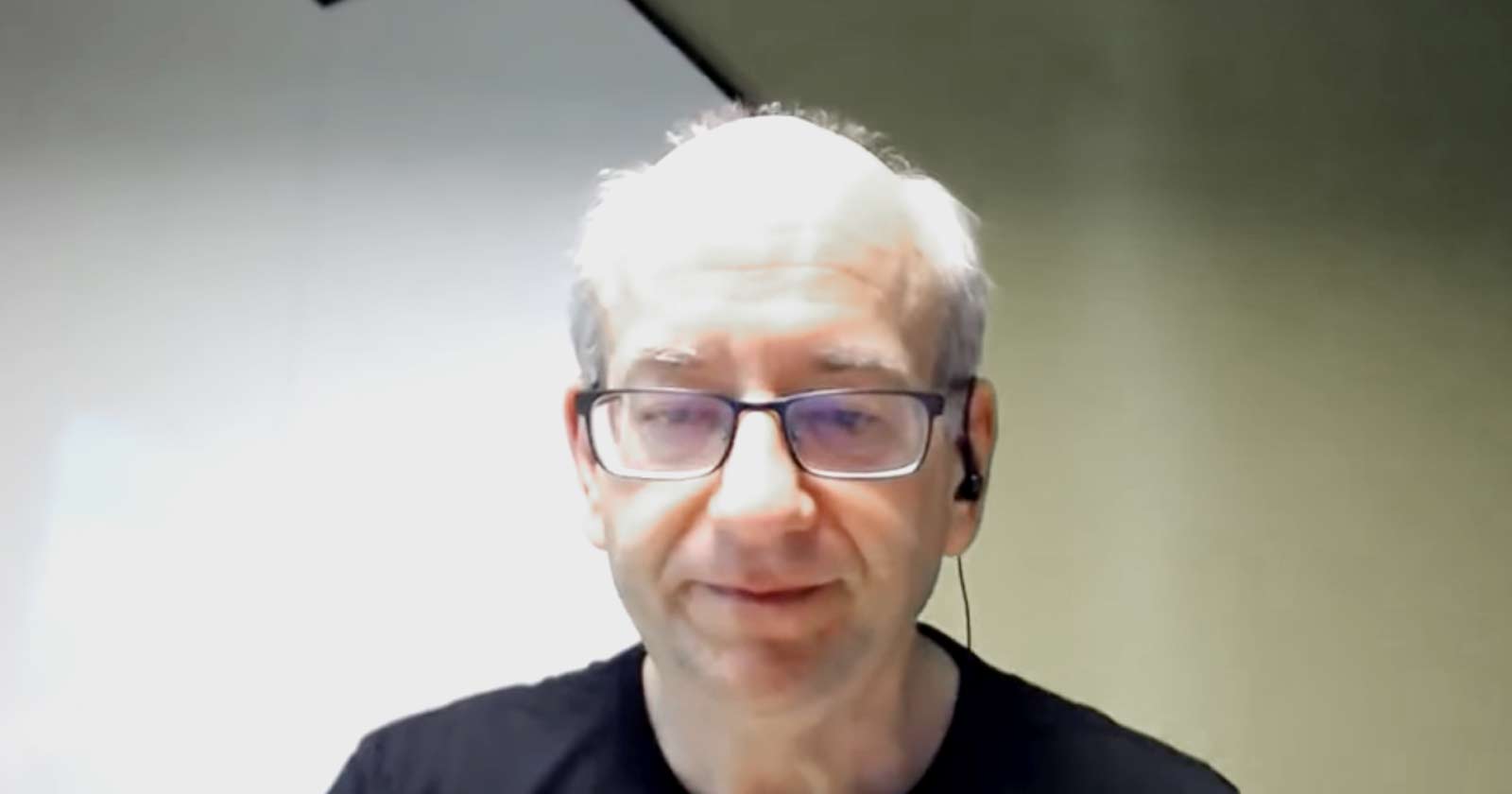

How to Make Money with SEO in 2019 - Whiteboard Friday http://bit.ly/2HRnBAl

Posted by randfish

Making money with SEO today is nowhere near the same practice it was in 2009. Sketchy, manipulative practices and simple, straightforward tweaks no longer do the job. To be successful in 2019, you need to be smart, strategic, and in tune with what searchers want. Rand Fishkin outlines three steps you need to have down if your goal is to improve your bottom line with the help of SEO.

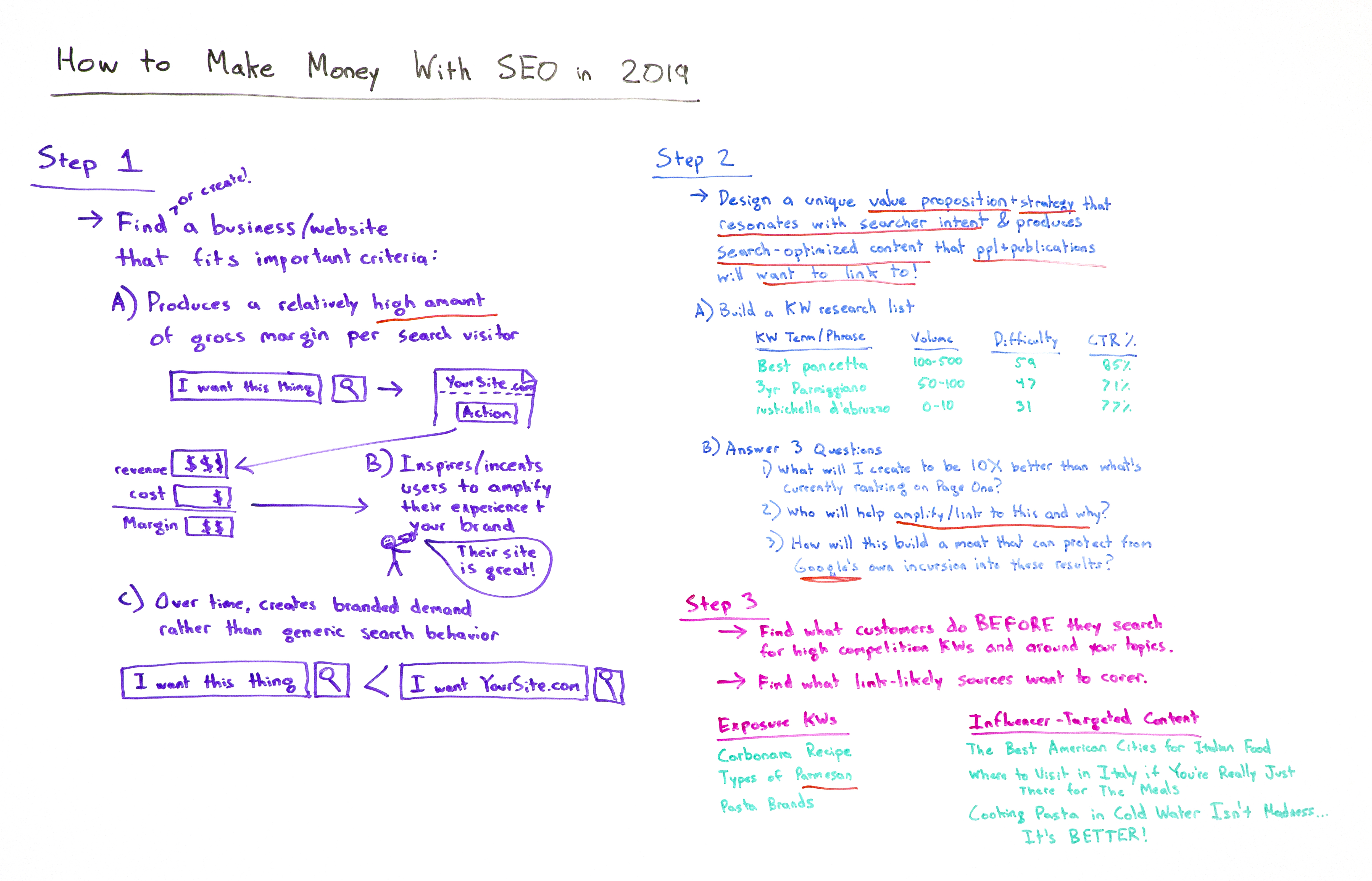

Video Transcription

Howdy, Moz fans, and welcome to another edition of Whiteboard Friday. This week we are talking about how to make money with SEO. Many of you might be watching this video because you googled or searched on YouTube for how to make money with SEO. What I want to do is talk about how that practice has changed dramatically in the last 10 years.

In 2009, if you were searching for how to make money with SEO, there were a lot of sketchy, manipulative, and relatively simplistic things you could do to make money online. That has changed. That is not the case anymore. I think this is why so many people in worlds like affiliate marketing have seen a lot of their sites disappear. The same goes for people building small websites or networks of small websites to sell relatively simplistic, unbranded products, services, or advertising.

Certainly part of that is because the margins on many of those products have gone way down. Some of it is big competition from many new entrants, including big companies like Amazon, but many others as well. A big part of it is the way you think about making money online and how you might be able to use SEO to do that.

If you are not one of those folks and are instead a professional marketer, I still think this video is going to be very valuable for you. What Google has done, what websites have done, and how users behave have brought a few key changes to our industry that shift a lot of this thinking. So stick with me.

Step 1: Find (or create!) a business/website that fits important criteria

If you want to make money online in the SEO world in 2019, your general step one has a few options. You can find and buy a business or website that fits some important criteria, or create a new one. Or you can modify a website you've already got to fit these criteria.

A) Produces a relatively high amount of gross margin per search visitor

The first one is you want a relatively high amount of gross margin per search visit. This is fundamentally different from the past. In the past, I knew plenty of people who built their living in the SEO world with, "A visitor is worth a penny to me. A visitor is worth a hundredth of a penny to me, but it doesn't matter because I can make up for it in volume." But today, earning search visitors is so much more challenging than in the past, especially for a new website, an emerging one, or a startup. That's why I believe you need this high gross margin to make it work.

So you want to find people who are searching for a variety of things. "I want this thing." They do a search, come to your site, and take an action of some kind. That could be signing up for an email list. It could be viewing some advertising. It could be buying a physical product or a software product, whatever it is. You make revenue that is significantly more than the cost of serving that customer. That includes the cost to you of maintaining the website and doing the marketing. It includes your time and hours and whoever else you employ, keeping the lights on, and paying the bills and the taxes. It also includes the product itself, whatever you're shipping or creating and serving, whether software, advertising, etc.

B) Inspires/incentivizes users to amplify their experience and your brand

Then it inspires and incentivizes users to amplify their experience and, as a result, your brand. You might say, "Well, why do I need that? Why do I need someone who's going to go and share?" Not every visitor, but you need a certain percentage of people to go and say, "Gosh, their site is great. I am going to post about it on my social media. I'm going to link to it. I'm going to talk to my friends about it. When people see this thing that I've made, I'm going to say, 'Oh, it came from such and such place.'"

You need that because this kind of online and offline word of mouth and amplification is core to a business's survival on the web, and that is fundamentally different from 10 years ago. Ten years ago you could do a lot of sketchy, spammy, manipulative stuff to earn links and rankings. Google has removed almost all of that ability for 99% of websites, especially in the English-language world. If you are operating in other languages, especially where Google's Web Spam Team has not done as well, there are still some of those opportunities. But generally speaking, this is crucial. You need people who are going to link to you and amplify you.

C) Over time, creates branded demand rather than generic search behavior

You need a business that, over time, creates branded demand rather than generic search behavior. Why? Because otherwise you do not create a competitive advantage that is sustainable over time. Others who do will certainly recognize that, enter your field, compete with you, and put you out of business.

"I want this thing" is a fine search phrase to target for your SEO. But you know what's way easier? "I want yoursite.com." People might search for your brand, your branded products, or the keywords they were searching for generically plus your brand name. Either way, what they're saying is, "Google, don't serve me just any result. Take me to that website." That is a competitive advantage, a barrier to entry that gives you a huge amount of protection as a business owner.

Step 2: Design a unique value prop/strategy that resonates with searcher intent & produces search-optimized content that people want to link to

All right, step two. You've found a business that fits these criteria, or you've created one, or you've modified your business so that it does this. Great. Now you need to design a unique value prop and a strategy that does a couple of things.

It's got to resonate with searcher intent, meaning you serve what searchers actually want, not what you want them to do. This is because Google has gotten too sophisticated at matching searcher intent to keyword phrases and ranking the sites that solve the searcher's problem. Ten years ago, in 2009, that was not the case. Someone could search for "best pasta," and you could serve them a site that tried to sell them a certain kind of pasta. You didn't have to compare a bunch of different brands and varieties and truly serve the searcher's intent. That's almost impossible today. There are a few exceptions, but those gaps are closing rapidly.

It also needs to produce search-optimized content that people and publications want to link to. That's totally different from 2009. Back then, you could manipulate the link graph and acquire links in ways searchers didn't necessarily love. Google would put you on top anyway, and you could take advantage of that for a while. Not the case anymore. Now you need people to want to link to you, to have a reason to link to you. Otherwise, you will not be able to get those top ranking positions.

A) Build a keyword research list

So first, build a keyword research list. You can use Moz's Keyword Explorer, which is what I personally use, but there are many keyword research tools out there on the web. You can type in phrases. I love Italian food, so I'm using examples like "best pancetta," "3-year aged parmigiano," and "Rustichella d'Abruzzo." That last one is a pasta brand that I personally think is the best one out there. The tool shows search volume, difficulty, and click-through rate percentages. So I'm building this list. You can check out the videos on keyword research if you want to dive deeper on this.

B) Answer 3 questions

Essentially, I want this list so I can answer some questions about the search phrases and terms I'm targeting with my business.

1. What will I create to be 10X better than what's currently ranking on page one?

First, what will I create to be 10 times better than what's already ranking on page one for these terms? Say I search for "best pancetta" and can't come up with a better web page than what everyone else has there. Then what's my competitive advantage? How am I going to take that over? You'd better come up with those answers for the crucial terms and phrases you're going after. Those are the ones that will bring in the gross margin dollars you need for your products, your services, your advertising, what have you.

2. Who will help amplify/link to this and why?

Second, when I produce that content, who will help amplify or link to it, and why? If you don't have a great answer to that question, don't publish the piece. Wait until you do. Find that great answer, because you need that amplification in order to perform, especially in the earliest stages.
Once you have lots of links, high domain authority, and lots of visibility in Google, you can put a lot of things out on the web and basically coast. You're relying on your brand's strength and on the fact that Google already likes your domain and is going to bias toward you. But in the early stages, with a new business, that's not the case.

3. How will I build a moat that can protect against Google's own incursion into these results?

Third, how will I build a moat around this business that can protect it from potential incursions by Google itself? Compare the search results today with 2009 and you will see a dramatic difference. Google now tries to answer queries fully with its own features: Google Maps, instant answers, featured snippets, and tabs. There are also Google Travel, Google Flights, Google Hotels, and the list goes on and on. That takes away a lot of opportunity, and you need a way to protect yourself from it. One of those ways is certainly branded search. Another is to make sure that the words and phrases you're going after, especially early on, are not ones where you have to compete with Google itself.

Step 3: Find what customers do before they search for high-competition keywords/around your topics

Step three, finally: find what customers do before they search for the keywords that are high-value to you, the high-competition keywords around your topics. What do they search for before they get to that? What do they search for around it? How can I capture this customer before that money search? Then I can create a new keyword research list and a new set of content to target those people. That makes it vastly easier to capture them earlier in their buying cycle, earlier in their potential funnel.

Exposure keywords

Exposure keywords would be things like "carbonara recipe."
Someone's going to search for carbonara and how to make it before they ever look up, "Now, where do I get pancetta?" The exposure keyword is potentially easier to rank for than the money keyword. This may be an imperfect example, but compare "types of parmesan" (first off, note the English/American spelling) with "3-year aged parmigiano." The latter is a transactional keyword: I know what I want. The former is an "I'm still learning about this" search. You're going to need content in both of those worlds. "Pasta brands": I'm learning. "Rustichella d'Abruzzo": I know what I want. You've got to serve both.

Influencer-targeted content

Finally, as part of step three, you want to find what link-likely sources are willing to cover. What is going to get you the amplification? Sometimes it's not the same thing as the exposure keywords or the money keywords. So you need content that is going to appeal to influential publications and people. Think of things like, "Okay, we're going to produce a piece. It doesn't necessarily serve a lot of searchers, but we know we can get links to it. We know people will tweet about it. We know they'll post it to their Facebook page. We know they might talk about it on Instagram."

"The best American cities for Italian food": ooh, competition between American cities. Whoever it is, say Philadelphia, we put them low in the rankings. New York, we put them high. They're going to fight about it relentlessly. Tons of people are going to talk about it. The "New York Post" is going to write about it. "The Philadelphia Inquirer" is going to be all pissed about it. Great.

"Where to visit in Italy if you're really just there for the meals." Hmm, that's the kind of thing someone would cover.

"Cooking pasta in cold water isn't madness. It's better." What? I actually do this, by the way. I do recommend starting pasta in cold water. We'll talk about that in another episode when I have my cooking setup here.
But regardless, the idea behind this is that I have influencer- and publication-targeted content in addition to exposure keywords and money keywords. This sort of strategic thinking is how you can make money with a new business or a new website in 2019. It is vastly different from what you saw 10 years ago.

All right, everyone. I hope you've enjoyed this. I look forward to your comments. We'll see you again next week for another edition of Whiteboard Friday. Take care.

Video transcription by Speechpad.com

SEO via SEOmoz Blog https://moz.com/blog May 31, 2019 at 03:54PM

Daily Search Forum Recap: May 31, 2019 http://bit.ly/2HLuXoG

Here is a recap of what happened in the search forums today, through the eyes of the Search Engine Roundtable and other search forums on the web.

Search Engine Roundtable Stories:

Other Great Search Forum Threads:

Search Engine Land Stories:

Other Great Search Stories: Analytics; Industry & Business; Links & Promotion Building; Local & Maps; Mobile & Voice; SEO; PPC; Search Features

SEO via Search Engine Roundtable http://bit.ly/1sYxUD0 May 31, 2019 at 03:00PM

http://bit.ly/2MoS3pu

Google Ads Restrictions Are Impacting Third-Party Tech Support Providers via @MattGSouthern http://bit.ly/2Ifqbiy

Google restricted ads for third-party tech support services to “legitimate” providers, but it hasn’t delivered the verification program it promised. As a result, third-party tech support providers are still unable to run ads.

A concerned reader brought this issue to my attention by sending in information about an article I wrote last September. That article covered changes to Google’s advertising policies that restricted who can run ads for third-party tech support services.

At the time, Google promised that a verification program would roll out in “the coming months,” allowing third-party tech support providers to run ads again.

Bradley Penniment, the owner of a phone repair store in Australia, reached out to me saying:

According to the information he sent me, repair shops around the world are getting their ads disapproved. I looked into these claims, and sure enough, he was right.

Third-party tech support providers are angry

Nine months have passed, and there is still no verification program for third-party tech support providers. Needless to say, repair shops are angry, and some say it’s taking a toll on their livelihood. A quick search on Twitter gives a glimpse of their frustration:

The individual who emailed me claims that Google is being particularly harsh with third-party service providers who repair Google devices.

If anyone has had a similar experience, I would sure be interested in hearing about it.

Google updates its “Other restricted businesses” policy

A policy update Google quietly rolled out last October may indicate that the company doesn’t intend to resolve this issue. Google’s “Other restricted businesses” policy was updated to prohibit the promotion of technical support by third-party providers for consumer hardware or software products and services. The same policy covers ads for bail bond services, which are banned across the board.

Does that mean ads for third-party tech support services are now banned whether or not the providers are considered legitimate?

SEO via Search Engine Journal http://bit.ly/1QNKwvh May 31, 2019 at 02:04PM

http://bit.ly/2EMIz15

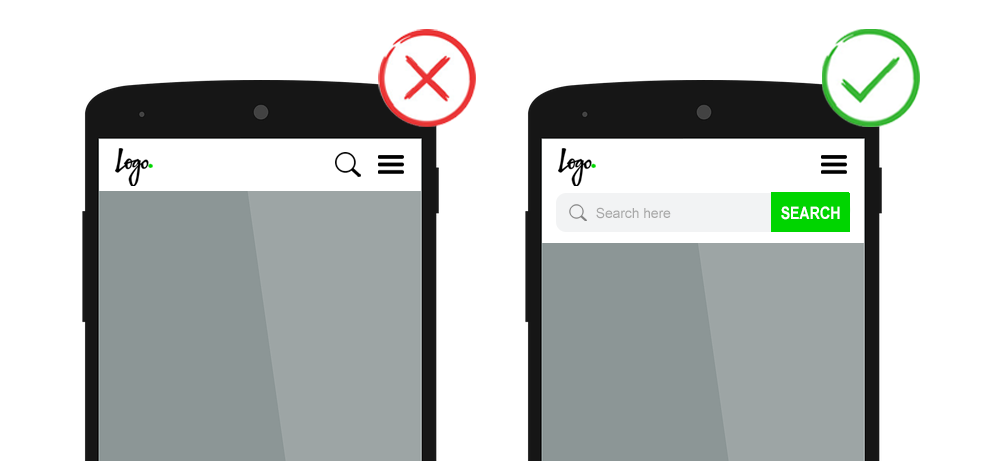

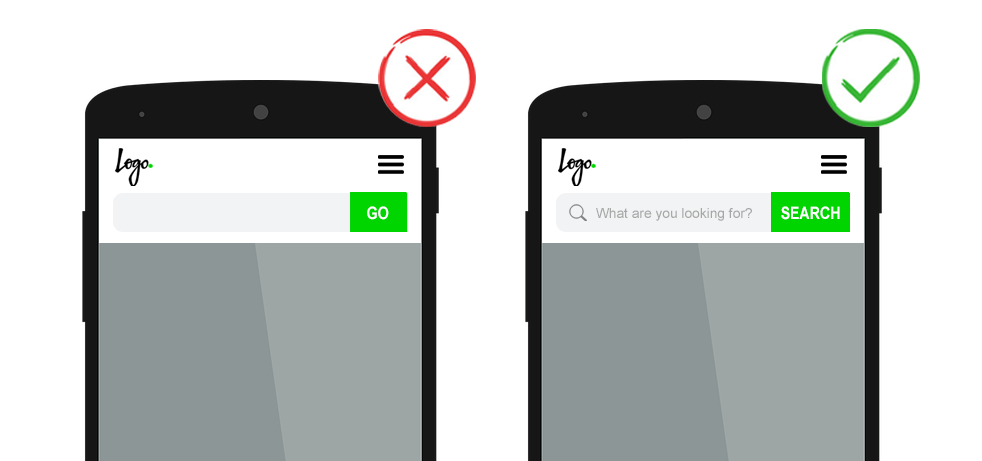

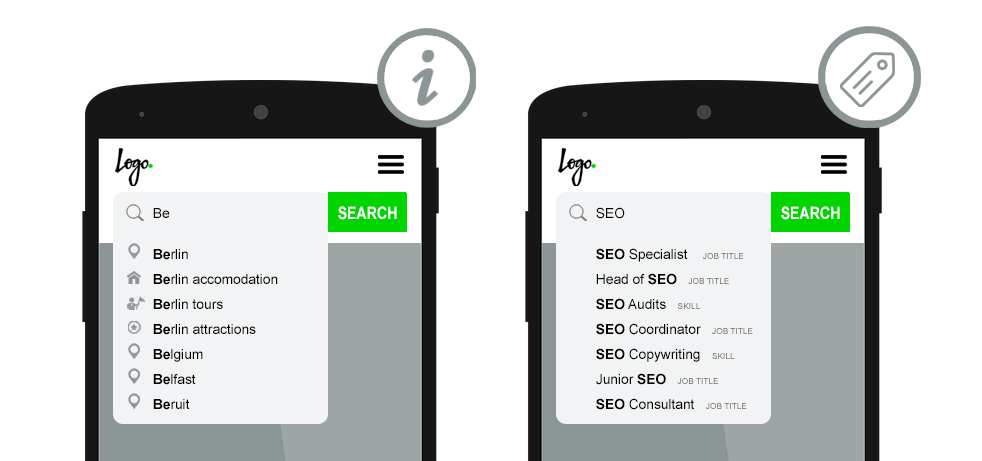

On-Site Search & SEO: Everything You Need to Know via @jes_scholz http://bit.ly/2MjrgLm

Every SEO will at some point encounter the content paradox: the more quality content your site has, the more useful it is, but the harder it becomes to find that content.

At a certain point, well-designed site navigation alone is not enough. Not every product, article, or other piece of content can have its own submenu. And not every visitor will happily spend time exploring via a category-led journey.

Searchers are looking for a specific category or topic, sometimes even an exact product or service. And they want to know within one click whether you have it. These visitors will immediately look for a site search box (also known as internal site search functionality).

Interacting with an optimized on-site search function is the digital equivalent of talking to a passionate and helpful librarian. You say what you’re looking for (type in a phrase) and it speedily shows you relevant options (provides a list of result pages from the site).

Sadly, many search boxes typify a librarian who sits disinterestedly behind the desk, giving a curt “over there” when you ask for information.

What you employ at the entryway to your content is a choice. It can be a valuable asset that drives up organic sessions and sitewide conversions and enhances brand image. Or it can be a barrier between visitors and their desired content.

On-Site Search Tools

You can get a site search box “out of the box” thanks to a variety of tools. They range from open source options to focussed software as a service to enterprise site search solutions costing over six figures. Most content management systems and ecommerce platforms have basic search functionality built in, which can be further enhanced with plugins. And some companies choose to custom build their own.

I won’t second-guess which search application will best suit your website. Instead, I will give you a list of best-practice functionality your chosen platform should accommodate.
On-Site Search Box Usability

We’ve all been there: thumbing on a mobile screen into a tiny search box that delivers no useful result.

Sadly, there’s no one right user interface for a site search box. A/B testing different variations will show which one generates the most queries and conversions with your visitors.

Having said that, there are a few best practices to abide by.

Don’t Make Users Search for the Search Box

The placement of your search bar will dictate its usage rate. There is no “best” spot for all websites. On desktop, users typically look for the site search box in the top right or top middle. On mobile, they expect it on its own full-width line within the header.

Don’t hide it in a drop-down or a hamburger menu. Don’t place it too near other boxes, such as a newsletter sign-up field. Don’t reduce it to a small icon that must be clicked for the text field to expand.

The input field should be big enough for most search terms. After all, it’s tough to correct errors or misspellings you can’t see. The reasonable width will vary from site to site, but as a guide, typical queries will fit into a 27-character search box. Feel free to go longer. Beware going shorter.

Position the search box in the same spot on every relevant page. I say relevant because search boxes aren’t necessarily a global element. They don’t belong on checkout pages, as they can distract from conversion. They may not belong on marketing campaign landing pages. They likely belong on 404 pages, though front and center rather than in the header, to help lost users find their desired content.

And then there’s the homepage. Should it have the standard search box placement? Or should search be featured above the fold? Answer this question: can your visitors convert faster with site search?
If your website is geared towards discovery and exploration, or has a focussed goal like driving signups, the typical placement shared by the rest of the site should do just fine. If, on the other hand, your users know what they are looking for, putting search in the hero image on the homepage can reduce the number of clicks to conversion.

Have a Clear Call to Action

A best-in-class search experience starts with the form itself. It should be immediately obvious what the search box field does. Often this is achieved by pairing a text prompt with a magnifying glass icon and/or a button labeled ‘Search’ or ‘Find’. The label ‘Go’ has fallen out of favor on the presumption that it isn’t as clear.

Use placeholder text within the search box field for two things. First, it affirms that yes, this is, in fact, a search bar. Second, it guides visitors about what they can search for on your site. Encourage searches with:

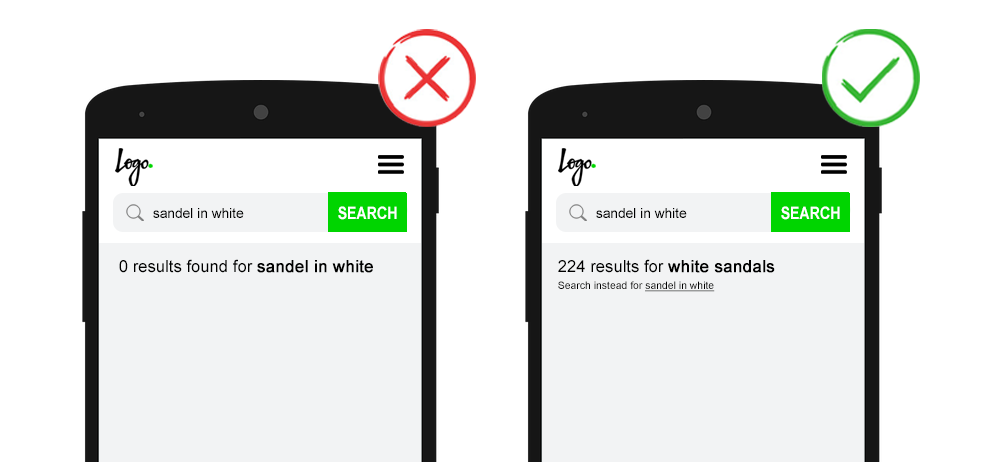

Just be sure the placeholder text clears when the user clicks in the search box.

Finally, while some visitors prefer to click a search button, others will just hit Enter on their device when they’re done typing. Both actions should trigger the search function.

Improve Imperfect Input

Visitors won’t type your idea of a perfect keyword. Any search function must adapt not only to their terminology but also to their inevitable failings. A user’s poor input doesn’t excuse a site’s poor output. On-site search must:

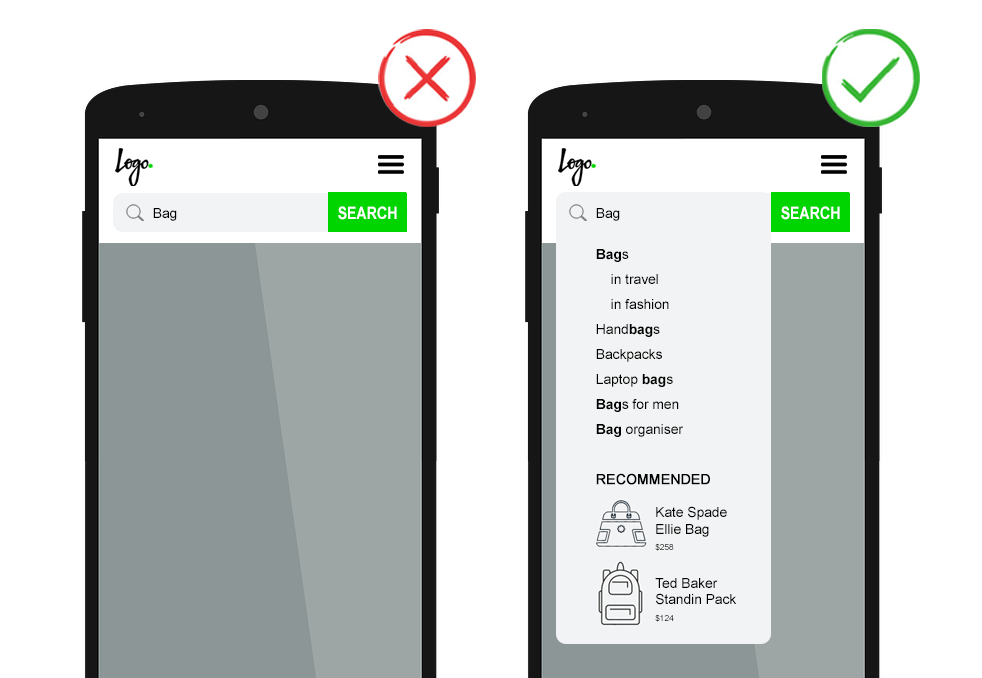
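Here is a minimal Python sketch of a server-side query parser that adapts to imperfect input. It assumes you keep a vocabulary of terms that actually occur in your catalog. The `parse_query` and `ParsedQuery` names and the 0.8 similarity cutoff are illustrative choices, not part of any particular search platform. The standard library's `difflib` is only a simple stand-in for the spelling correction a dedicated search tool would provide. The sketch keeps the original query alongside the correction, so the results page can still offer a search for the term as typed. It also flags empty submissions instead of running them.

```python
import difflib
from dataclasses import dataclass
from typing import Optional


@dataclass
class ParsedQuery:
    original: str             # exactly what the visitor typed
    corrected: Optional[str]  # autocorrected query, or None if no term was changed
    is_empty: bool            # True when nothing searchable was submitted


def normalize(raw: str) -> str:
    """Lower-case the query and collapse runs of whitespace."""
    return " ".join(raw.lower().split())


def parse_query(raw: str, vocabulary: set[str], cutoff: float = 0.8) -> ParsedQuery:
    query = normalize(raw)
    if not query:
        # Empty submission: the caller decides what to do (error message,
        # 'all categories' page, ...) instead of rendering an empty results page.
        return ParsedQuery(original=raw, corrected=None, is_empty=True)

    fixed = []
    for token in query.split():
        if token in vocabulary:
            fixed.append(token)
            continue
        # Swap an unknown token for the most similar known term, if one clears
        # the similarity cutoff; otherwise keep the token exactly as typed.
        close = difflib.get_close_matches(token, vocabulary, n=1, cutoff=cutoff)
        fixed.append(close[0] if close else token)

    corrected = " ".join(fixed)
    return ParsedQuery(
        original=raw,
        # Case and whitespace normalization alone doesn't count as a correction.
        corrected=corrected if corrected != query else None,
        is_empty=False,
    )
```

For example, `parse_query("Duffle  Bag", {"duffel", "bag", "gym"})` produces the corrected query `"duffel bag"` and still preserves `"Duffle  Bag"` as typed. That gives you both strings needed to show the corrected and original queries at the top of the results page.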

Whenever on-site search automatically corrects the search query, for example in the case of misspellings, show the corrected and original queries at the top of the page. Be sure to give a clear option to force a search with the original term.

Also, decide how you will handle the submission of an empty search (i.e., the user has clicked into the box but not typed anything before triggering the search function). You could send them to an ‘all categories’ page. You could show an error message. Or you could simply not allow the form to submit. Returning an empty results page shouldn’t be the answer.

Inspire Input with Predictive Search

Predictive search engages visitors from the first characters they type by showing result suggestions. This:

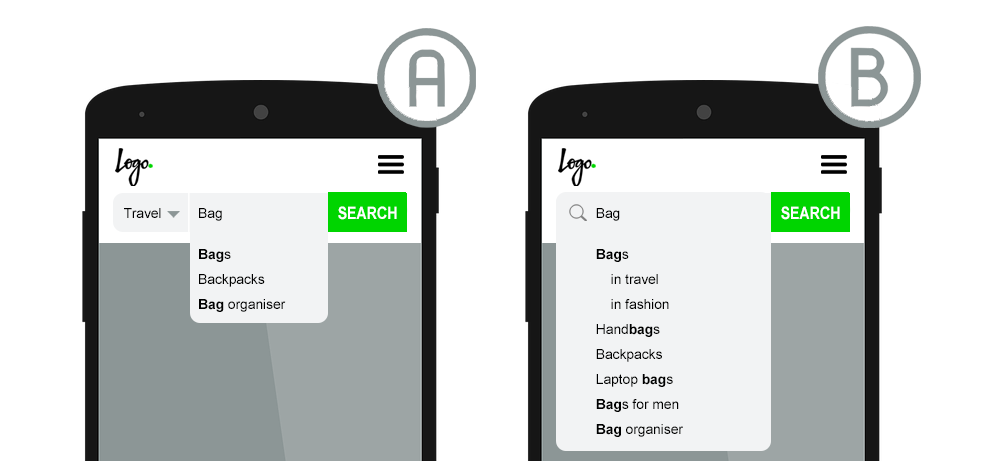

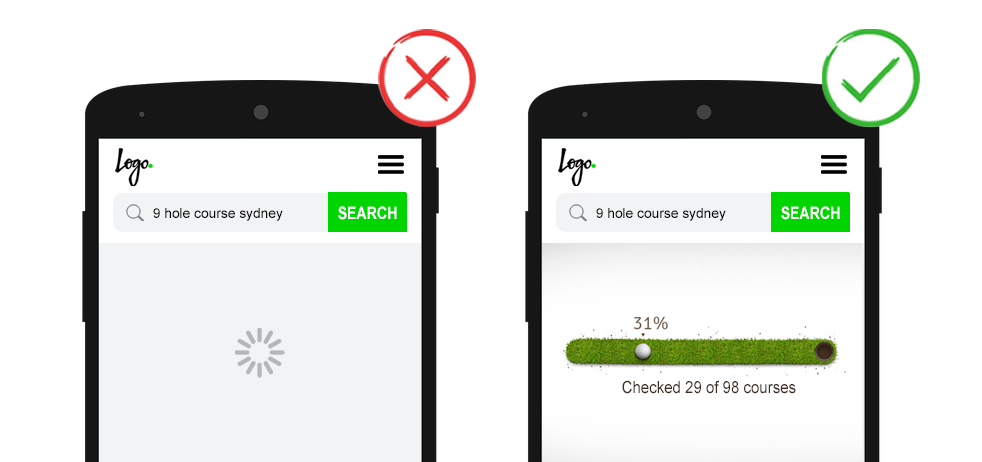

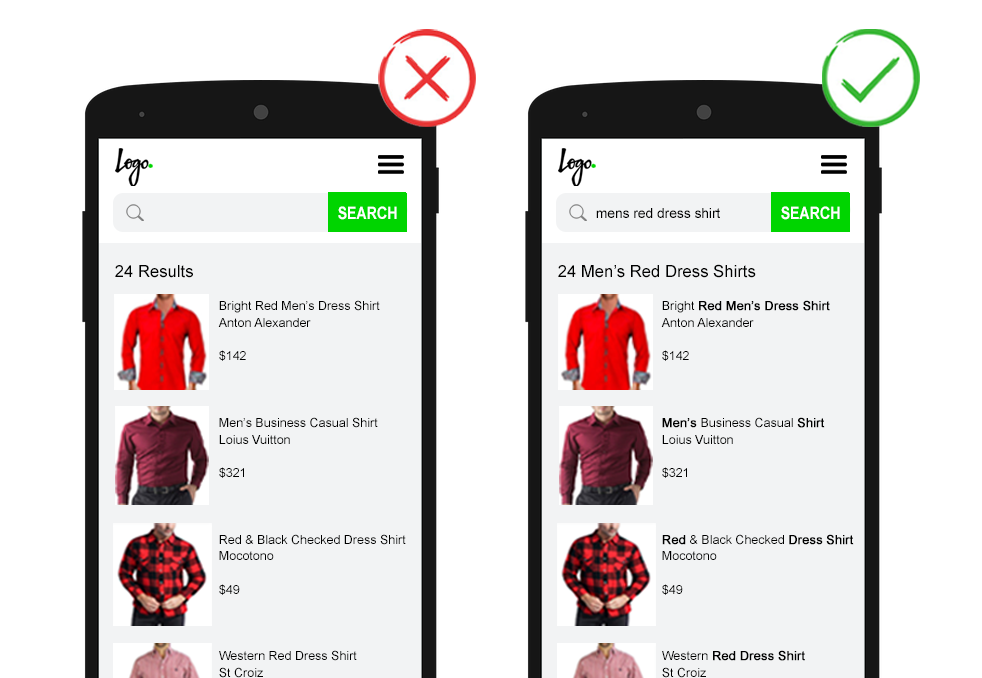
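Here is a minimal sketch of how an autocomplete suggestion list, with the bold highlighting this article recommends, might be generated on the server. It assumes a list of past queries already sorted by popularity. The `suggest` function and its inputs are hypothetical. The sketch uses plain substring matching, so typing "bag" finds "handbags" and "laptop bags" but not semantically related terms such as "backpacks". Suggestions that start with the typed text are listed first, and the matched portion is wrapped in `<strong>`.

```python
import html
from typing import Sequence


def suggest(typed: str, popular_queries: Sequence[str], limit: int = 5) -> list[str]:
    """Suggest past queries that contain what the visitor has typed so far.

    `popular_queries` is assumed to be pre-sorted by popularity. Suggestions
    that start with the typed text come first; the stable sort keeps the
    popularity order within each group. Assumes str.lower() preserves
    length (true for ASCII text).
    """
    needle = " ".join(typed.lower().split())
    if not needle:
        return []

    hits = []
    for query in popular_queries:
        pos = query.lower().find(needle)
        if pos != -1:
            hits.append((query, pos))

    hits.sort(key=lambda hit: hit[1] != 0)  # prefix matches (False) sort first

    suggestions = []
    for query, pos in hits[:limit]:
        end = pos + len(needle)
        # Bold the part that matches the typed text; escape every segment so
        # stored queries cannot inject markup into the drop-down.
        suggestions.append(
            html.escape(query[:pos])
            + "<strong>" + html.escape(query[pos:end]) + "</strong>"
            + html.escape(query[end:])
        )
    return suggestions
```

For instance, `suggest("bag", ["handbags", "bag organizer"])` returns `['<strong>bag</strong> organizer', 'hand<strong>bag</strong>s']`. Some sites prefer to bold the completed portion instead of the matched one. Either choice is worth split testing.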

Such autocomplete drop-downs can offer query suggestions and ‘in category’ results, and/or show a few pieces of specific content, including images where relevant.

For example, if I type “bag”, predictive search can complete my query intent with query suggestions such as “handbags”, “backpacks”, “laptop bags”, “bags for men”, “bag organizer” and so on. It can also show me category options such as “sports”, “fashion” or “travel”, and offer a few select product suggestions.

For better readability, use bold to highlight how the user’s typed query relates to the query suggestions.

Some brands choose to have the category options as a drop-down next to the search box input field. While ecommerce players have made this common practice, it adds an extra click to the search conversion. Split test this against automated category suggestions, which don’t require additional user action.

For websites offering different content types, consider using iconography or labels in the search suggestions.

And finally, for usability, be sure that hitting ‘esc’ closes the search autocomplete and that the list of suggestions can be navigated with the keyboard.

Internal Search Result Page Usability

Deliver Search Results Quickly

Page load speed is always important, but especially so for on-site search. Google’s research shows that if “search results are slowed by even a fraction of a second, people search less.”

If search takes more than one second to process, show a progress indicator or other useful animation to distract the user in the interim.

Reiterate the Search Query

The visualization of on-site search results can be just as important as the results themselves. It is jarring to be on a brand’s website, do an on-site search, and land on a page that looks like a Google SERP. Search results pages should follow a similar style to your category pages.

That likely means not a list of SEO page titles, meta descriptions, and URLs. Instead, show headings, user-focused titles, images, call-to-action buttons, and other rich content details. In such a rich search results page experience, ensure the search query is present in multiple locations.

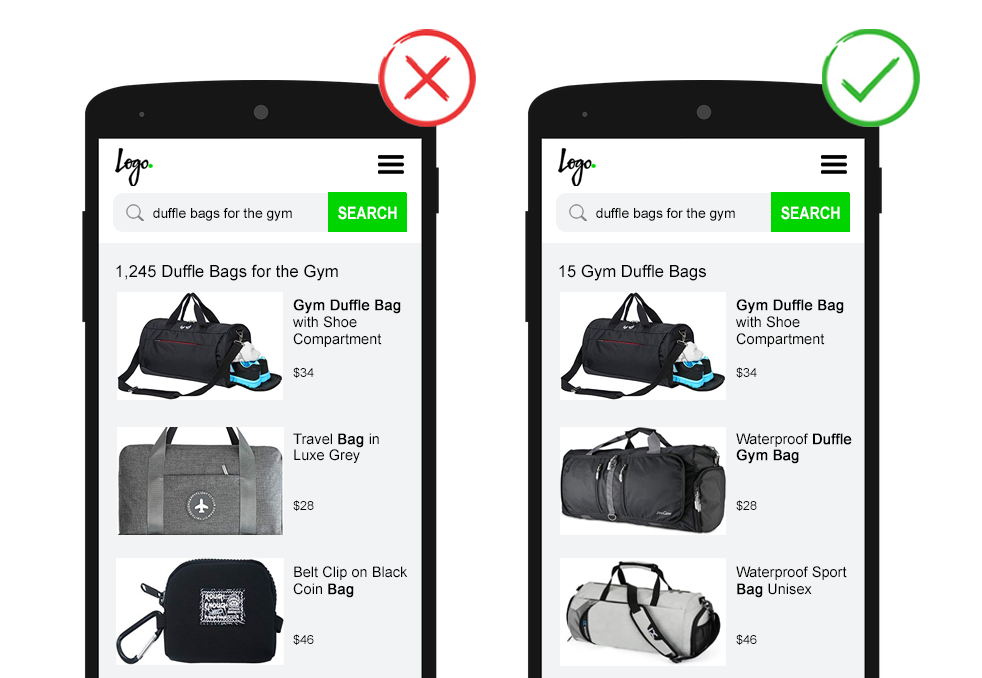

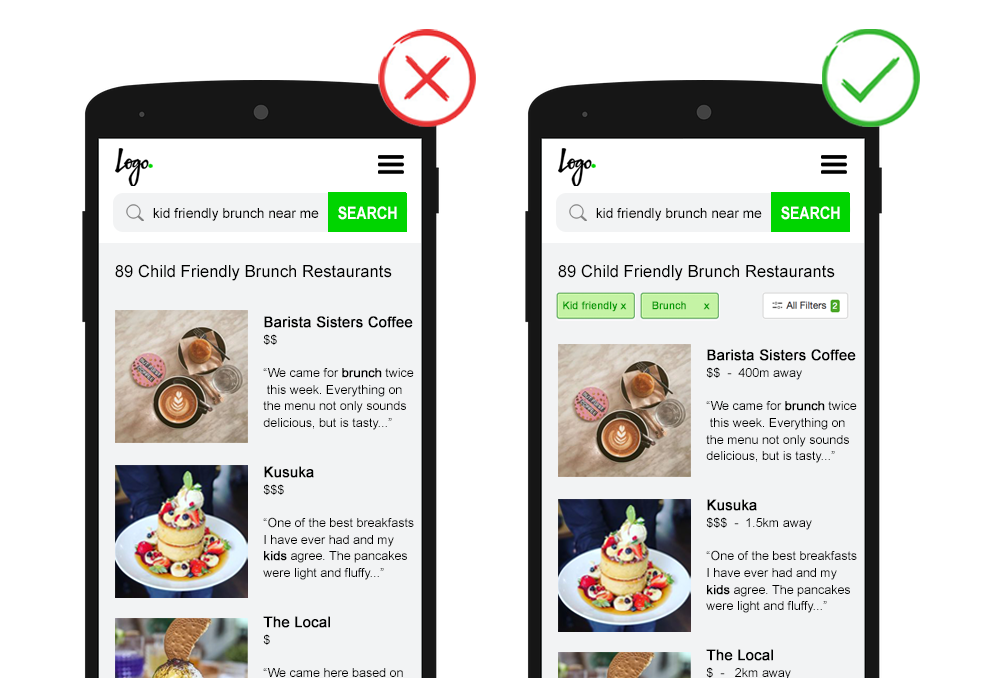

Improve Result Relevancy with Semantic Search

How many times have you typed a long-tail query into a search box only for half of that query to be seemingly ignored?

Returning too many search results can frustrate users, who may begin to question why they even bothered to use the internal search. What’s more, similar to Google SERPs, there’s often a marked drop-off after the first page of results. Despite this, many sites make the mistake of setting their on-site search criteria too broadly. It’s critically important to display only relevant search results.

On-site search without natural language processing treats the words in a query as unrelated terms and retrieves results based on basic keyword matching. This method can easily misunderstand user intent, as it takes the input keywords at face value.

The more sophisticated approach, semantic search, analyses the context and intent behind the query. Such implementations deliver more relevant results, especially for long or complex queries.

For example, the search “duffel bags for the gym” should return only gym duffel bags, not every item containing the word bag or gym. Especially when the searcher has made the effort to be precise, be respectful and return results that match their complete search intent. Or, at least, show the exact matched results first. Then add a subheading such as “Other items that may interest you” and display the broad matched results under it.

If semantic search is not an option, you can implement constrained search instead of a free text search box. In constrained search, fields are populated by set drop-down menus and/or searchable queries are restricted to autocomplete suggestions. This limits visitors to searching within the scope of the site architecture and ensures a quality result. However, it also requires more effort on the part of the searcher and more controls in the user interface. It also limits user intent analysis on search data.
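If semantic search isn't available yet, plain keyword matching can approximate the "exact matches first, broad matches under a subheading" fallback. The Python sketch below implements that fallback; it is not semantic search. The `tiered_search` name, the tiny stopword list, and the crude plural folding are all assumptions for illustration. Items containing every meaningful query term form the first tier. Items sharing only some terms go in a second tier, meant for something like "Other items that may interest you", ordered by how many terms they share.

```python
import re
from typing import Iterable

STOPWORDS = frozenset({"a", "an", "the", "for", "of", "with"})


def terms(text: str) -> set[str]:
    """Lower-cased word tokens with crude plural folding ("bags" -> "bag").

    A real engine would use proper stemming or lemmatization instead.
    """
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {w[:-1] if len(w) > 3 and w.endswith("s") else w for w in words}


def tiered_search(query: str, items: Iterable[str]) -> tuple[list[str], list[str]]:
    wanted = terms(query) - STOPWORDS
    exact: list[str] = []
    broad: list[tuple[int, str]] = []
    for item in items:
        have = terms(item)
        overlap = len(wanted & have)
        if wanted and overlap == len(wanted):
            exact.append(item)             # every meaningful query term matched
        elif overlap:
            broad.append((overlap, item))  # partial match: show under a subheading
    # Items sharing the most terms first; the stable sort keeps catalog order in ties.
    broad.sort(key=lambda pair: -pair[0])
    return exact, [item for _, item in broad]
```

Consider a catalog holding a gym duffel bag, a travel duffel bag, a laptop bag and a gym towel. The query "duffel bags for the gym" returns only the gym duffel bag as an exact match. The travel duffel bag leads the second tier.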
Leverage Search Within Search

If a query is broad to begin with and returns a multitude of listings, help the searcher refine the results by providing relevant filtering (to narrow) and sorting (to organize) options. On the other hand, where the facets are communicated within the search query, an optimized on-site search will break down the search phrase to automatically apply filters and give an easy option for adjustments. For example, the search phrase “kid-friendly brunch near me” would apply filters for the attributes “child-friendly” and “brunch” and sort results by distance. The focus here is, again, relevancy. Remember, every filter option you add is a decision load on the user and potentially another URL using crawl budget.

As the searcher may not convert on the first visit, encourage the user to save any multi-faceted searches, or better still, to opt in to receive email updates for new matches. This not only makes it easy for the visitor to re-find engaging content; as an additional benefit, offering saved search functionality encourages the creation of a profile.

9. Leverage the No Results Page

What if there are no results to display? Firstly, check whether your search function is returning results based on all website content.

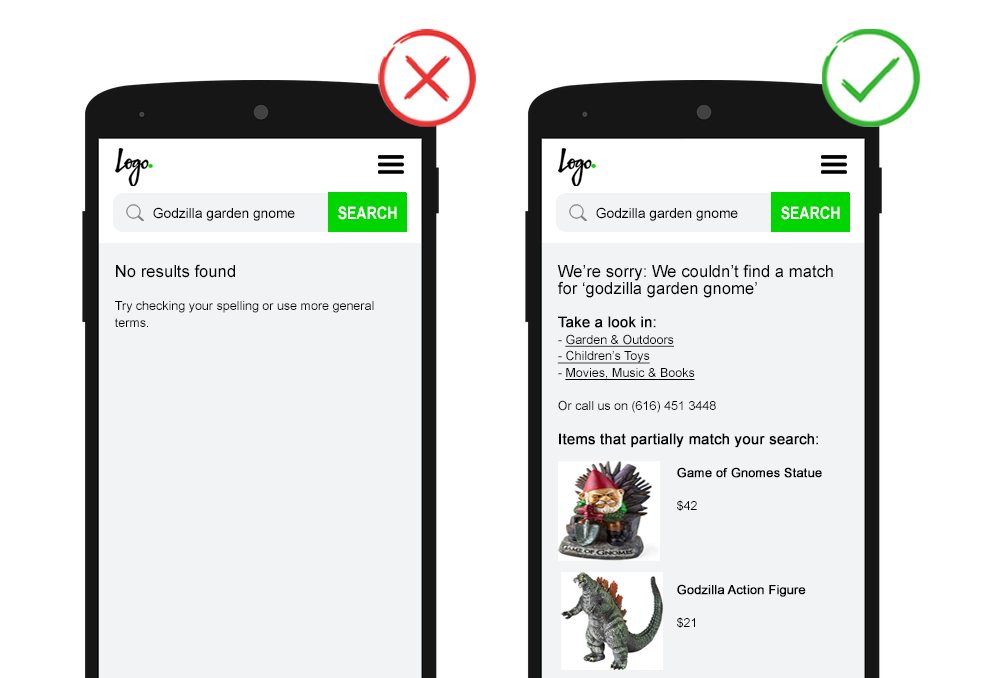

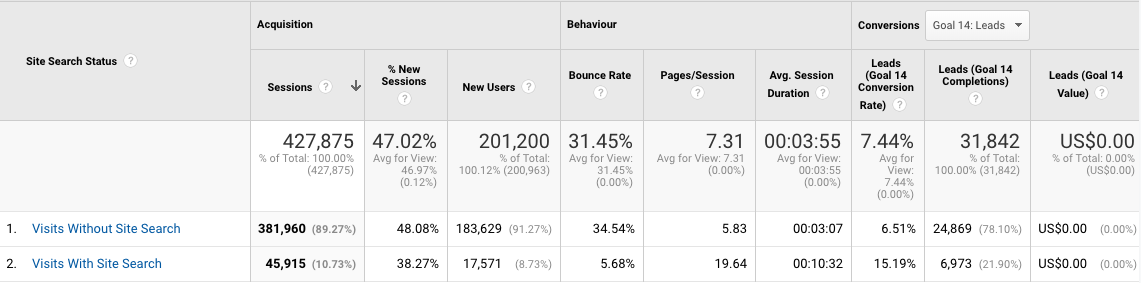

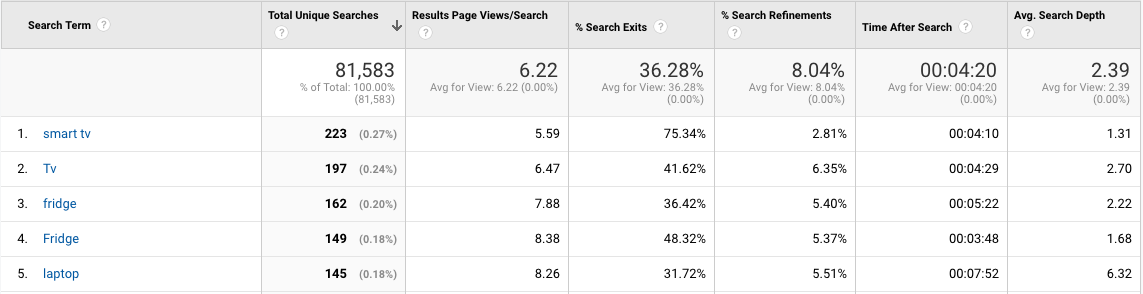

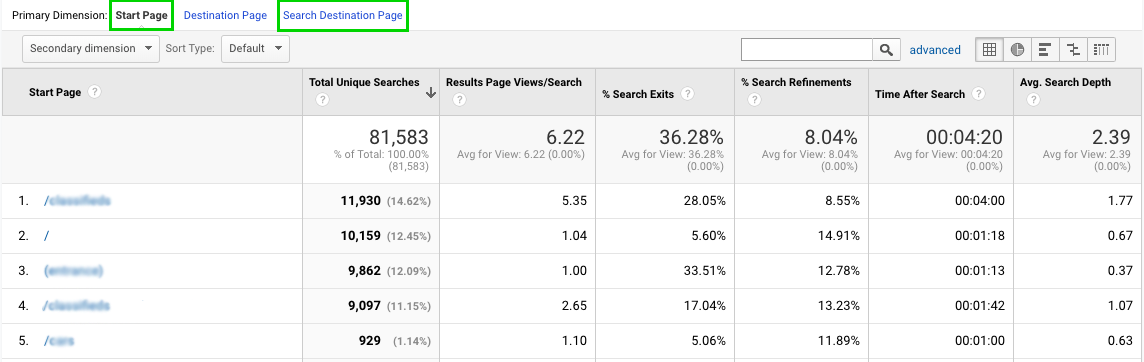

If this is the case and you truly have no relevant content, be sure to handle it more gracefully than with the infamous “No results found” message. Worse still is blaming the searcher by telling them to “Check your spelling” (on-site search should autocorrect) or “Use more general terms” (on-site search should match against broader content). If visitors believe you don’t have what they’re looking for, they will leave, often frustrated, which makes it less likely they will choose to engage with your brand in the future.

Apologetically state that there are no results available and propose a valuable next step. This could take the form of alternative search suggestions, contextual category links, broader matching content, or details for contacting support. Some websites choose to show top searches, promote selected content, or list all their categories. These options do not recognize the searcher’s intent and are therefore less valuable. However, any next step is better than a dead end.

Action Internal Site Search Data

On-site search data holds a wealth of actionable insights for SEO, and it’s incredibly easy to set up in Google Analytics.

The usage report helps you understand to what extent users took advantage of your internal search function and how effectively the search results created deeper engagement with your site. Use these metrics to quantify the potential KPI impact of site search optimization. Visits with site search commonly show significantly lower bounce rates, higher time on site, more pages per session, and more conversions.

The search terms report shows what people are looking for and, more importantly, whether your site is delivering. Use search terms to:

NOTE: For high-quality data, work with your development team to ensure all search terms are tracked in lower case, no matter how the query is typed. Otherwise, you will find “Term X” and “term x” splitting data within your reports, as in the screenshot above.

The search pages report shows where searches began (start page) and the search results page (search destination page). Use search pages to:

In addition to the basic site search reporting, it’s best practice to set up a Google Analytics event to track when the ‘no result’ page is triggered. Example values could be:

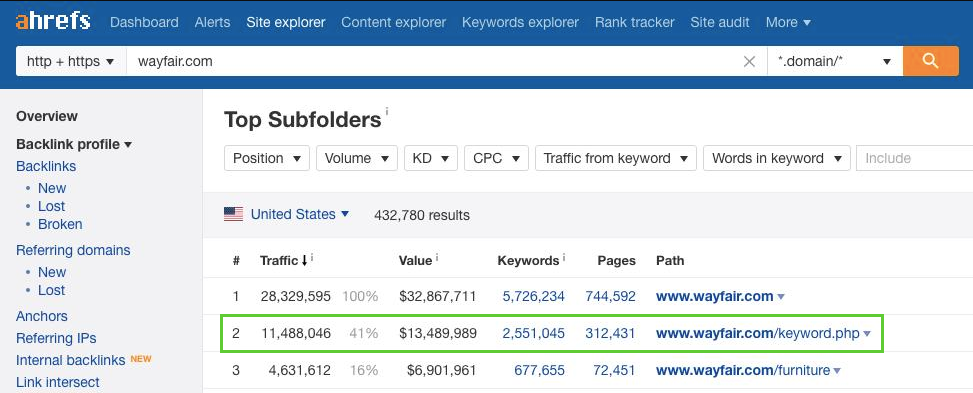

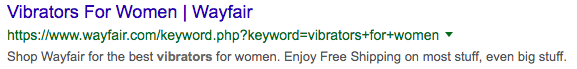

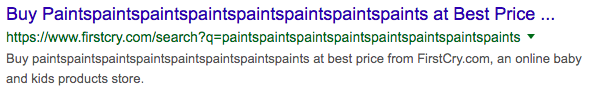

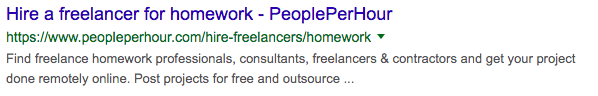

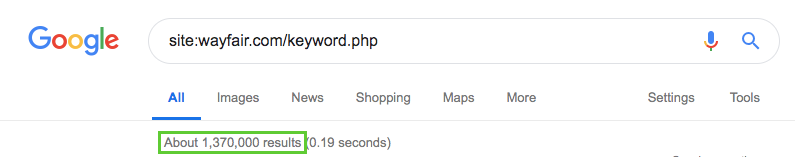

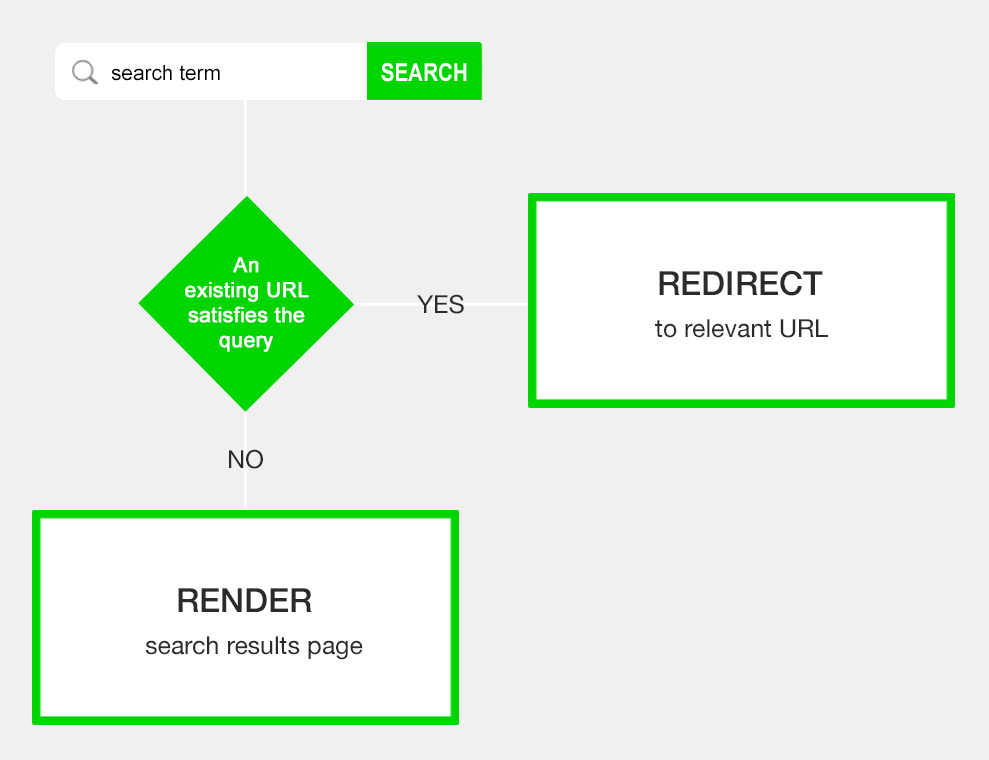

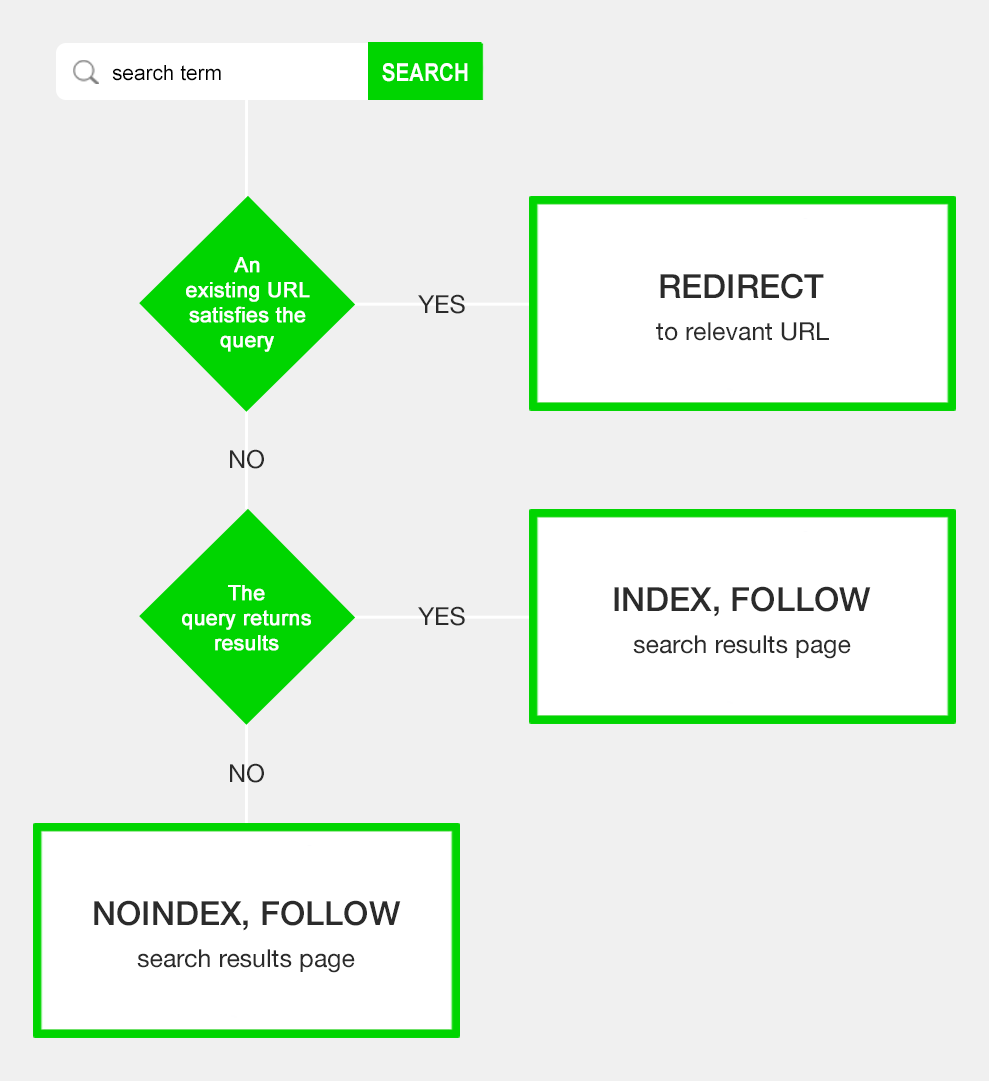

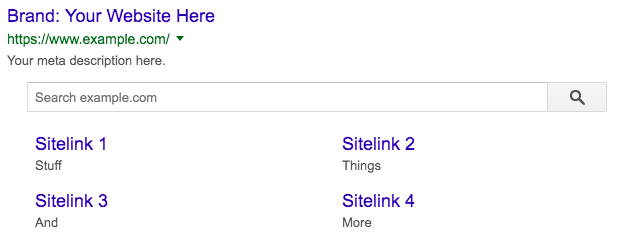
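The lower-case NOTE and the no-result event come together at this step. As a minimal sketch, assuming you can export raw search terms along with the number of results each search returned (the `(term, result_count)` pairs and the function names are hypothetical, not a Google Analytics format), normalizing terms before counting keeps “Term X” and “term x” together and surfaces the highest-volume zero-result queries.

```python
from collections import Counter


def normalize_term(term: str) -> str:
    """Lower-case and collapse whitespace so 'Term X' and 'term  x' count as one."""
    return " ".join(term.lower().split())


def problem_queries(searches: list[tuple[str, int]], top_n: int = 10) -> list[tuple[str, int]]:
    """Most frequent zero-result search terms, highest count first.

    `searches` holds (raw search term, number of results shown) pairs; this is a
    hypothetical export format, not a Google Analytics data structure.
    """
    counts = Counter(
        normalize_term(term) for term, result_count in searches if result_count == 0
    )
    return counts.most_common(top_n)


if __name__ == "__main__":
    sample = [
        ("Blue Widgets", 0),
        ("blue widgets ", 0),
        ("Red Widgets", 4),
        ("returns policy", 0),
    ]
    print(problem_queries(sample))  # [('blue widgets', 2), ('returns policy', 1)]
```

In a live setup, the same normalization would ideally run before the term is sent to analytics, so the reports themselves never split.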

Use this data to identify high volume problem queries and improve the searcher experience.

SEO Benefits & Risks of On-Site Search

Crawling & Indexing of Internal Site Search Result Pages

You’ve probably read that Google doesn’t like to index site search pages. One often repeated source was an article by Matt Cutts in 2007 where he commented, “we’ve seen that users usually don’t want to see search results… in their search results”. So the prevailing SEO approach was to prevent crawling and indexing of site search result pages with effective robots handling.

But this is 2019, and you’ve probably seen examples of Google ranking search results pages in search results. There are many case studies in which giving Googlebot full access to site search result pages has brought in a wealth of organic sessions.

But this doesn’t come without risks. Exposing search results pages to Google opens up your site to generating an indexable page for any keyword, which means you can end up with user-generated spam pages. This can create some “interesting” SERPs, especially with dynamic page titles and meta descriptions. Women being offered free shipping on “big stuff”… Babies being keen on paints… Kids getting out of homework…

Of course, these pages are unlikely to be triggered on Google in the wild. The point is that while some relevant internal search pages might make it to the top and bring in users, there’s likely to be an iceberg’s worth of low-quality pages below the surface dragging down domain quality and using precious crawl budget.

So what’s a site to do?

Control Crawl Budget by Using Parameter-Based On-Site Search URLs

For the most part, on-site search result pages are implemented with the GET method, passing the search term as a URL parameter. For example: http://bit.ly/2Wvf3XV

SEO professionals who are strong proponents of indexing internal search result pages often encourage the optimization of the search URL by making it a static path.
For example: http://bit.ly/2KfqxYV

Both these URL structures use crawl budget unless specified otherwise by robots handling. To avoid this, some internal search implementations use the POST method to remove the search term from the URL and render internal search result pages on a single URL, for example www.example.com/search. The obvious downside of this approach is that the URL doesn’t have an opportunity to rank for the search term. What’s more, the search can’t be saved by the user.

So which is best for SEO? John Mueller has said that it’s easy for Google to “get lost in the weeds” of internal search pages, and that both crawling and low-quality pages could become problems, although quality “is not always black and white”, as there are ways to shape internal search pages to be seen more like a category page. He recommends the parameter approach, as it’s easier for Google to recognize it as “something that may vary or as something that a user is submitting”. This allows Google to optimize crawling. In addition, parameter URLs give you the flexibility to decide whether search pages should be crawled, with the click of a dropdown within Google Search Console and Bing Webmaster Tools.

Avoid Potential Duplicate Content With URL Redirects

If you have an existing URL that satisfies the search query, redirect the user. For example, if a user searches “blue widgets” and there is a category page for widgets and a filter for colors with blue as an option, send the user to that page. There’s no reason to have both the search result page http://bit.ly/2WBD5jU and the category page http://bit.ly/2TqvRdV, as each of these fulfills the same user intent: to see a list of blue widgets. If relevant, also consider redirecting search queries at the item level, such as when a user searches for a specific product SKU. Being taken directly to a content page, rather than a single-item search results page, accelerates conversion.
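A minimal sketch of that redirect logic, assuming a lookup table of queries that existing category or product pages already satisfy. The table entries, the `q` parameter name, and the 301 status code are assumptions for illustration, not taken from the article. Queries without a matching page fall through to a parameter-based search URL, the structure John Mueller recommends.

```python
from urllib.parse import urlencode

# Hypothetical mapping from normalized queries to pages that already satisfy them.
EXISTING_PAGES = {
    "blue widgets": "https://www.example.com/widgets?color=blue",  # category + filter
    "sku-12345": "https://www.example.com/products/sku-12345",  # single product
}

SEARCH_URL = "https://www.example.com/search"  # hypothetical search endpoint


def normalize(query: str) -> str:
    """Lower-case and collapse whitespace so equivalent queries share one key."""
    return " ".join(query.lower().split())


def resolve_search(query: str) -> tuple[int, str]:
    """Return (HTTP status, URL) for an on-site search request.

    If an existing page already satisfies the query, redirect to it;
    otherwise serve the parameter-based search results URL.
    """
    key = normalize(query)
    if key in EXISTING_PAGES:
        return 301, EXISTING_PAGES[key]  # status code choice is an assumption
    query_string = urlencode({"q": key})  # spaces are encoded as '+'
    return 200, f"{SEARCH_URL}?{query_string}"


if __name__ == "__main__":
    print(resolve_search("Blue  Widgets"))
    print(resolve_search("widgets for kids"))
```

Normalizing before the lookup means “Blue Widgets” and “blue widgets” reach the same category page instead of creating two search URLs.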
From an SEO perspective, hijacking the search query, whether at the category or item level, limits the total number of search result pages to content the site taxonomy doesn’t already cover. This means crawl budget is spent only on pages that wouldn’t rank without site search. These redirects also prevent any possibility of duplicate content or of a ranking signal being split across pages.

Avoid Thin Content Issues by Handling ‘No Result’ Pages

Providing a valuable next step within the user interface, as advised above, already reduces the likelihood of any thin content issues. But as a “no result” page offers limited user value, you may want to add a noindex, follow robots meta tag. This helps prevent user-generated spam pages from showing up in Google SERPs and dragging down domain quality.

These three SEO actions ensure that indexable on-site search pages are limited to queries your website taxonomy doesn’t cover and that offer relevant results, a worthy allocation of crawl budget.

Search Box within Google Search Results

Having on-site search offers the opportunity to claim more space in search engines. A Sitelinks Searchbox can appear as part of a SERP result, often for high volume branded queries. You can tell this search box to use your website’s own search functionality, so that searchers are sent directly to your on-site search result pages. Becoming eligible for this feature is relatively easy.
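Two of the actions above can be sketched in a few lines, assuming pages are rendered server-side (the function names are hypothetical): a robots meta tag that switches to `noindex, follow` when a search returns no results, and the schema.org `WebSite`/`SearchAction` JSON-LD that Google documents for Sitelinks Searchbox eligibility. The `q` parameter and example.com URLs are placeholders, and Google’s current structured data guidelines should be checked before relying on the exact markup.

```python
import json


def robots_meta(result_count: int) -> str:
    """Robots meta tag for a search results page: keep empty pages out of the index."""
    content = "noindex, follow" if result_count == 0 else "index, follow"
    return f'<meta name="robots" content="{content}">'


def sitelinks_searchbox_jsonld(site_url: str, search_url_template: str) -> str:
    """schema.org WebSite/SearchAction markup for the homepage.

    search_url_template must contain the literal placeholder {search_term_string},
    which is replaced by the user's query.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "WebSite",
        "url": site_url,
        "potentialAction": {
            "@type": "SearchAction",
            "target": search_url_template,
            "query-input": "required name=search_term_string",
        },
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


if __name__ == "__main__":
    print(robots_meta(0))
    print(
        sitelinks_searchbox_jsonld(
            "https://www.example.com/",
            "https://www.example.com/search?q={search_term_string}",
        )
    )
```

Because `follow` is kept on empty result pages, any links in the suggested next steps can still be crawled.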

However, like all such snippets, being eligible doesn’t guarantee the box will appear.

On-Site Search Best Practices

Too often, on-site search user experience testing is limited to a quick check that the search box appears on desktop, then mobile, and that it renders results when a basic query is entered. But a good-looking search box doesn’t mean anything if the results aren’t helpful to the searcher. User experience covers more than the interface alone. It’s the search function’s interaction with the user: via the form, via the search results pages, and especially via the logic behind the screen.

Having no site search function is better than having one that gives searchers a false impression of the availability of content on your site. As such, internal search experience testing should be rigorous to ensure results are both relevant and accurate.

Understand the state of your current search capabilities with the best practice checklist. Be sure to test on a variety of devices to check ease of use and load speed.

✓ Search box is a reasonable width and prominently placed on all relevant pages.
✓ Search box effectively prompts user action through iconography, buttons, and placeholder text.
✓ Search box offers predictive search suggestions based on query intent.
✓ Search interface accepts both click and keyboard actions, such as enter to search, esc to close, etc.
✓ Search function accommodates casing, numbers, stop words, misspellings, synonyms, and other variants.
✓ Search function handles empty searches.
✓ Search function returns results for all document types and informational keywords (e.g., “returns policy”).
✓ Search result pages offer a rich, on-brand experience while clearly reiterating the search query.
✓ Search result pages use semantic search to ensure relevant results for the query intent are shown.
✓ Search result pages offer relevant filters for broad queries and allow the easy removal of any filters for narrow queries.
✓ Search result pages offer to save multi-faceted searches.
✓ No-results pages are set to ‘noindex’ and offer a valuable next step.
✓ If parameter-based URLs are used, the parameter is configured in GSC.
✓ URL redirect logic is in place to avoid search pages duplicating category pages.
✓ Google Analytics site search usage report KPIs are benchmarked on a regular basis and any significant shifts are explained with an annotation.
✓ Google Analytics search terms and search pages reports are reviewed on a regular basis for insights into site content and design.
✓ Google Analytics event for the no result pages is in place and the report is reviewed on a regular basis.
✓ Website homepage is eligible for the Sitelinks Searchbox.

Now ask yourself: are you leveraging the full potential of on-site search?

Image Credits
Featured & In-Post Images: Created by author, May 2019

SEO via Search Engine Journal http://bit.ly/1QNKwvh May 31, 2019 at 11:03AM

http://bit.ly/2WCr1Pn

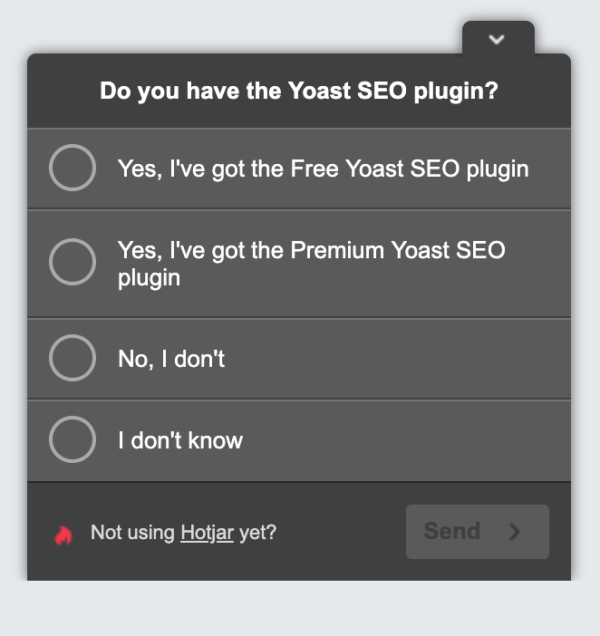

Why and how to investigate the top tasks of your visitors http://bit.ly/2wELh4x

At Yoast, we continuously want to improve our website and our products. But how do you find out what makes them better? Sure, we need to fulfill the needs of our clients. But how do you know what your clients’ top tasks are? Doing research is the answer! We love doing research because we get valuable insights out of it. Here, we’ll dive into one research type we use regularly: customer surveys, and in this case, the top task survey.

How do you know what your customers need?

When we started working together with AGConsult on the conversion optimization of Yoast.com, they advised doing a top task survey. Research is always the first step in the conversion optimization process, and you simply can’t get all relevant information out of plain data from, for instance, Google Analytics. To know why your customers are visiting your website, you need your customers to talk to you.

If you think you now have to start a conversation with all your visitors, don’t worry. Luckily, there are several other ways to make your visitors talk to you. One example is setting up an online top task survey, which will pop up on your visitor’s screen whenever you want it to, for example, immediately after they open your website or after a couple of minutes.

The best question for your top task survey

To make sure you don’t influence your visitors’ answers, it’s important to ask an open question. By asking closed questions, you make your visitors choose between answers you set up yourself. Although you can add an ‘other’ field, visitors are more likely to quickly choose a listed answer, because that’s easier than putting their own opinion in an open field. So closed questions prevent you from getting to know all your visitors’ thoughts.

So, what question should you ask? Within the top task survey we perform on our own website, we always ask this question: ‘What is the purpose of your visit to this website? Please be as specific as possible.’ This pop-up appears at the bottom right of the website, no matter what the landing page is.

This wording encourages visitors to really think about their specific purpose. Also, the addition of ‘be as specific as possible’ often results in more valuable answers. You could choose to add only this one question, or you could ask one more question to learn more about your customers. For Yoast, within our top task survey, we always ask visitors a second question to tell us if they already use our most important product:

For other companies, it could be valuable to use this second question to learn the age of visitors, the market they work in, etc. It all depends on what you want to do with the outcomes. If you’re not going to do anything with the answers to the second question, please use only one question in the survey. The fewer questions, the more visitors will participate.

What to do with all the answers

When you end the survey, you probably have lots of answers to go through. How do you start analyzing all these answers? We recommend simply reading through the answers and setting up categories as you read. Set up categories that cover lots of answers; don’t be too specific. You’ll need to find a pattern in your visitors’ answers. Only then can you create actionable steps to optimize your website or your products. To give an example, we’ve listed some of our own categories below:

This might give you some ideas when setting up your own categories. The second step, after you categorize all answers, is setting up a plan. Now that you know which categories are the most important to your visitors, it’s important to optimize your website using that information. For example, our own top task survey showed us that almost 25% of our visitors are looking for plugin related help. We already had a menu item ‘support’ which linked to our knowledge base, but after the survey, we had the idea of renaming the menu item ‘help’, because lots of visitors used that word. We set up an A/B test comparing the menu item ‘support’ with the variant ‘help’. What do you think happened? ‘Help’ was a winner! This shows again: knowing what your customers are looking for is the most valuable information you can get.

How often should you repeat this survey?

We believe it’s good to do a top task survey once a year. However, if you don’t change much on your website or in your products, every other year can be enough as well. Every time you analyze the answers of a new top task survey, you learn whether you’re on the right track or need to shift your focus toward another product or another part of your website. You can never do too much research!

Tools to start an online survey

There are several free and paid tools out there in which you can create a survey like this. We use Hotjar, but we’re planning to create our own design and implement it with Google Tag Manager. Other tools we know for setting up online surveys are: On their sites, they have a clear explanation of how to use these tools to perform a top task survey.

Have you ever performed a top task survey for your website? Did you find out anything you didn’t know, or anything that surprised you? Let us know!

Read more: Content SEO: How to analyze your audience » The post Why and how to investigate the top tasks of your visitors appeared first on Yoast.
SEO via Yoast https://yoast.com May 31, 2019 at 10:50AM

https://selnd.com/2wrvsxV

Google is expanding when it shows ads to “people in targeted locations” https://selnd.com/2YXLBHp Google has been quietly rolling out a change to the location targeting options in Google Ads. The change was first spotted in display campaigns, but now appears to have rolled out for search and shopping campaigns, too. What’s changing? Google has changed the “People in your targeted locations” option to “People in or regularly in your targeted locations.” The old setting of “Reach people in your targeted locations” did not include showing ads to people “who searched for your target locations but whose physical location was outside the target location at the time of searching.”

Source: Google Ads help page prior to the change

With this change, instead of showing ads to people only when they are physically located in your targeted locations at the time of their search, Google will also include people who regularly commute or travel to your targeted locations, even when they aren’t physically there when they perform a search. The idea is that your location-targeted campaigns can reach people with ads targeted to their work locations when they are home, and vice versa.

Why we should care. The change is somewhat subtle, but important to note. It’s another lever of targeting control being supplanted by machine learning.

Andrea Cruz, digital marketing manager at KoMarketing Associates, first alerted us to the change when she noticed it in display campaigns in mid-May. “This makes sense if you are targeting commuters,” said Cruz. But, as she notes, Google doesn’t tell us what the criteria are for someone to be considered regularly located in your target area. The frequency and recency factors could make a difference to some businesses. There is no reporting that shows advertisers volume or performance breakouts by “people in” versus “regularly but not currently in”. “It would be great to have both options,” said Cruz. “Keep people who are in your targeted location, and as separate option, people who are regularly in your targeted locations.”

Digital marketing consultant and CEO of SEMCopilot Ted Ives noticed the change this week. “Although my default reaction to these sorts of changes is to be skeptical,” he said, “I do think in this case it will result in incremental high quality traffic coming in for advertisers. We live in a mobile world…that means people move around a lot; people we target during the day don’t disappear at night, they go home. Why not get your message in front of them there? So, I think this is a helpful change in the paradigm; probably even an overdue one.”

About The Author

Ginny Marvin is Third Door Media's Editor-in-Chief, managing day-to-day editorial operations across all of our publications. Ginny writes about paid online marketing topics including paid search, paid social, display and retargeting for Search Engine Land, Marketing Land and MarTech Today. With more than 15 years of marketing experience, she has held both in-house and agency management positions. She can be found on Twitter as @ginnymarvin.

SEO via Search Engine Land https://selnd.com/1BDlNnc May 31, 2019 at 10:27AM

http://bit.ly/2W0EMmJ

Attacked by Negative SEO? Lost Rankings? Read This via @martinibuster http://bit.ly/2ww5oSe

Someone asked me about using the disavow tool to combat negative SEO and regain lost rankings. The following is a detailed explanation of what is typically involved in finding a solution for a negative SEO attack.

Disavow Tool of Limited Usefulness

John Mueller posted on Reddit that many inside Google feel that the disavow tool is not necessary. The reason is that they feel Google is already discounting spammy or irrelevant links, so the tool isn’t contributing to a solution: the links are already being ignored. That’s also why Google purposely makes the tool hard to find. John Mueller said so explicitly.

Google actively discourages the use of the disavow tool, and the ONLY reason it exists is that the SEO community BEGGED Google for one. Google resisted offering a disavow tool, but after several months relented and offered it. The tool is not something Google forced on SEOs; it is something SEOs begged Google to provide.

As it stands, Googlers have repeatedly stated that the proper use of the disavow tool is when you know you have bad links, as in you’re responsible for them. Googlers do not encourage publishers to use the disavow tool to fight negative SEO. Why would they? Googlers don’t even believe in negative SEO.

References: Google’s John Mueller on How to Use Disavow Tool – Two More Times; Google Discourages Use of Disavow Tool. Unless You Know the Bad Links; Googler Gary Illyes Has Never Seen a Real Case of Negative SEO

I recently wrote this (citation below):

References: Google’s John Mueller on Disavow Tool – FULL TRANSCRIPT; How Negative SEO Shaped Disavow Tool

Do Link Related Penalties Exist?

Yes, link related penalties still exist. But no, random low quality scraper links don’t cause penalties. Google is ignoring low quality links. Link related manual actions are real and they are still happening. It “seems” like a lot of link related manual actions were handed out from March through April 2019. There was quite a bit of chatter about them in the Google Webmaster Forums, and publishers came to me for help in removing those penalties.

I believe that understanding link distance ranking algorithms could help people better understand why Google is so confident about its ability to neutralize low quality links. Link distance ranking algorithms are among the newest techniques for analyzing links in a search engine. Google and other researchers have published research papers and patents about them, and reading them may help publishers see where that confidence comes from.

Reference:

Is Negative SEO Real?

I believe that what some people regard as negative SEO is not really negative SEO. Many sites accumulate low quality links, including adult type links. It’s a normal pattern on the web. Spammers (and white hat SEOs) believe that linking out to high quality sites will help their sites appear less spammy. But if you have just a little understanding of link analysis, then you’ll know that the search engines are not only two steps ahead of that practice, they’re actually about a thousand miles ahead.

Is negative SEO possible? I believe it is, but not in the way that people currently think it’s done. I don’t dare share any more details than that. I believe that negative SEO is a convenient scapegoat to avoid acknowledging problems with the site itself.
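To make the link distance idea mentioned above more concrete, here is a toy sketch of the general technique, not Google’s algorithm: start from a hand-picked set of trusted seed pages, give each link a “length” that grows with the number of outgoing links on the linking page (log2(1 + outdegree) is an assumption chosen for illustration), and compute each page’s shortest distance from the seeds with Dijkstra’s algorithm. Pages that trusted pages never reach get no distance at all, so a cluster of spam pages linking to a site leaves its distance unchanged. The graph and page names are hypothetical.

```python
import heapq
import math


def link_distances(graph: dict[str, list[str]], seeds: set[str]) -> dict[str, float]:
    """Shortest distance from any seed page to every page reachable from the seeds.

    Assumption for illustration: a link from page u has length log2(1 + outdegree(u)),
    so links from pages with many outgoing links count as 'longer'.
    """
    dist = {s: 0.0 for s in seeds}
    heap = [(0.0, s) for s in seeds]
    heapq.heapify(heap)
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist.get(u, math.inf):
            continue  # stale heap entry; a shorter path was already found
        out_links = graph.get(u, [])
        length = math.log2(1 + len(out_links))
        for v in out_links:
            candidate = d + length
            if candidate < dist.get(v, math.inf):
                dist[v] = candidate
                heapq.heappush(heap, (candidate, v))
    return dist


# Hypothetical link graph: page -> pages it links to.
EXAMPLE_GRAPH = {
    "trusted-news": ["your-site", "partner"],
    "partner": ["your-site"],
    "spam-farm-1": ["your-site", "spam-farm-2"],
    "spam-farm-2": ["your-site", "spam-farm-1"],
}

if __name__ == "__main__":
    distances = link_distances(EXAMPLE_GRAPH, {"trusted-news"})
    for page, d in sorted(distances.items()):
        print(page, round(d, 3))
```

In this toy model the spam farms never appear in the output: links from pages the seeds cannot reach simply contribute nothing, which is one way to picture how low quality links can be neutralized rather than penalized.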
Many have approached me about negative SEO that could not be resolved through the disavow tool. A review has often revealed that the problem was within the site and not due to negative SEO.

Scapegoats and Red Herrings

I’ve been approached by people who claim to be affected by negative SEO and upload huge disavow lists every month. Yet they never, never find relief; their rankings never improve. That’s like rubbing olive oil on your broken arm in the belief that if you keep rubbing just a little more, the arm will heal. But it never heals, because rubbing it does nothing.

Everybody’s baby is beautiful and well behaved to the parent. The baby is perceived differently by everybody else. The real problem affecting a site tends to be more evident to someone looking at the site from the outside.

Negative SEO: The Takeaway

If your disavows aren’t working and your rankings aren’t returning, stop and consider that the real problem is something else. If disavowing low quality links does not work, the problem lies elsewhere, most likely in the website itself, not outside of it. Acknowledging this reality is the first step toward correcting the real problem that’s affecting your rankings.

SEO via Search Engine Journal http://bit.ly/1QNKwvh May 31, 2019 at 05:03AM

http://bit.ly/2YWtIJ9

Google to No Longer Display Text-Only AdSense Ads via @MattGSouthern http://bit.ly/2KfQAPR  In an effort to modernize its advertising products, Google is retiring the text-only AdSense ad unit. Google notified AdSense publishers about this change via email:

Google AdSense will automatically rename existing “Text ads only” and “Display ads only” to simply “Display ads.” In addition to sunsetting text-only ads, Google announced several other changes to AdSense ads which are rolling out in the coming weeks. Here’s a summary of everything that was announced in the email:

Google notes that it has been investing heavily in understanding the best ways to increase user interest in ads. Presumably, these changes are designed to make ads more appealing to users, which may then result in more clicks and earnings for AdSense publishers.

SEO via Search Engine Journal http://bit.ly/1QNKwvh May 31, 2019 at 01:32AM
