|

http://bit.ly/2GaBYPw

What Digital Marketers Need to Know About Cookies & Tracking by @rollerblader http://bit.ly/2CUV0q0

At one point in time, the cookie was the gold standard of tracking digital marketing efforts. Flash forward just a few years, and the cookie began to show its age as attribution and multi-channel tracking became a thing. Move forward to smartphones and, although cookies are still useful, a cookie can’t track across devices. So can you still use them? Are they worth anything? Yes, but you need to know how to use your data first.

This post will walk you through cookies across channels like affiliate, social media, email, and PPC and give you examples of when they can be trusted, when they can’t, and some possible alternatives. By reading closely and running some of the attribution and channel tests below, you can also make your company more profitable for a double win.

Why Cookies Are Less Reliable

Consumers and Internet users became scared of “privacy” breaches and “being tracked” almost overnight. With demand comes a market and that turned into a few things.

This put the first dent in the dependability of cookies and tracking. Next, you have last click attribution being a preferred tracking method (and for companies that really don’t understand data, first click). The problem with last click attribution is that you don’t understand the entire journey of the customer, and, in some cases, you cause financial damage to your company.

A Sample Customer Journey

Here’s an example I still see today that impacts how a company allocates its marketing budget and why it is spending in the wrong places:

In this case, it looks like affiliates are a high-volume channel because they’re taking credit for a lot of sales, but in reality, the affiliate just poached the sale at the last minute. You can tell this is happening if the affiliate site has incredibly high conversion rates and really short click-to-close time frames.

Many companies would assume they should spend more on affiliates because of last click attribution, but that is probably the wrong choice. Without paying the commission to the affiliate who did not refer the sale, the PPC phrase that is borderline profitable is now more profitable. But if you use last click, you won’t know this and you may shut that phrase or ad group off. This damages your company because you no longer have those customers coming in from the PPC term that was profitable, all because you did not have proper tracking and attribution in place.

But what if we add another step? Maybe the person couldn’t get back to their computer and instead shops at home. Now, none of the cookies are set. The influencer won’t get credit for their click, the coupon affiliate cannot intercept at checkout, and PPC, which introduced the new customer, will never get credit.

If you’re asking why, it’s simple. The journey started and cookies were set for all of these channels, but because the device changed, there are no longer any cookies assigned to the customer.

The same goes if you send an email and the click happens on a mobile phone while the customer is at lunch. They get back to their desk and, because the many form fields make it hard to shop on a mobile phone, they check out on their computer instead, meaning the cookie is no longer applied to them. The same applies to shopping from a website on their phone and purchasing in an app.

This is where tracking gets fun.

Tracking Without Cookies

You need to have a multi-device tracking platform installed or database-driven solutions, and that takes creativity, programming, and solid data analysis.
Here are four ways you can track without cookies and across devices.

1. Capture the Email Address & Name

If you can capture the email address and the name of the person, you can store this in a database and tie it to the referring channel at and after checkout. Although it’s not perfect, if a cookie gets wiped, you can run the email addresses used at conversion back against the database and see where each customer was referred from.

2. Have the Person Log In or Create an Account

Try to find a way to get the person to create an account or log in using a social media or Gmail account. If the initial parameter has a UTM source of PPC and there is an affiliate later on, because it’s a logged-in user you can keep track of the touchpoints for a better attribution line. There are tools built specifically for this type of tracking that I use with my clients.

Now you can start to view each of the touchpoints and begin testing which channels to keep or drop based on whether conversion rates fall and total revenue goes up or down. Maybe you remove influencers and see revenue drop without this touchpoint and/or total conversion rates decrease. That means the influencer played a part in closing the sale and you should keep it going and try to expand.

3. Log the IP Address & Cookie of the User

If you notice the same IP logged on from two separate devices around the same time and in the same categories or product mixes, there may be a way for you to group that into the same user. If you can tag that user as the same customer, you get the same benefits as above, plus another level of data to show what is working and what is not.

4. Capture a Full Lead via a Free Trial or Promise of a Discount

If you remove the coupon site that shows up for your brand + coupons in Google like in the example above, revenue may go up by the amount of the commission and affiliate network fee.
However, double check your conversion rates and total sales for the store (not for the affiliate channel) and make sure they stay the same or similar. If they do, the coupon site may not have impacted the sale, and your company can be more profitable by not having sites that intercept your shopping cart in your affiliate program. This is just a general example; you need to test everything for yourself.

Bonus tip: The best solution I’ve found if you find coupon websites poaching your shopping cart and/or using affiliate links is to rank your own site for your URL + coupons in Google. By replacing the sites currently showing up for that term in positions 1 to 5 with non-affiliate sites, you can control the codes on those sites, and you don’t have to pay commissions or affiliate network fees, or pay your customer support team to handle complaints about non-working codes.

Conclusion

Cookies are still important and are a standard, but with a continuing demand for less tracking and fewer cookies, driven by changes like Europe’s GDPR and Apple’s ITP blocking third-party cookies, you must adjust and keep up with the times. The good news is that if you have someone who is good with data and attribution and can also come up with creative solutions, you will no longer be cookie dependent and can begin to scale your company with real tracking and attribution, and hopefully become more profitable.

Now I’m off to go buy a cookie. Wow, this post made me hungry!

Subscribe to SEJ: Get our daily newsletter from SEJ's Founder Loren Baker about the latest news in the industry!

SEO via Search Engine Journal http://bit.ly/1QNKwvh January 30, 2019 at 07:14AM
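The email-based and login-based tracking described in the post above can be sketched in a few lines. This is a minimal illustration, not the author's tooling: the `Touchpoint` record, its field names, and the helper functions are all hypothetical, and it assumes every touchpoint can be tied to an email address captured at login, signup, or checkout. It shows how a stored log survives a device change and how the full journey differs from what last click attribution reports.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Touchpoint:
    """One logged visit (hypothetical schema, for illustration only)."""
    email: str      # identifier captured at login, signup, or checkout
    channel: str    # e.g. the utm_source value: "ppc", "influencer", "affiliate"
    device: str     # "desktop", "phone", ...
    timestamp: int  # any sortable value, such as seconds since epoch


def build_journeys(touchpoints):
    """Group touchpoints by normalized email and sort each journey by time."""
    journeys = defaultdict(list)
    for tp in touchpoints:
        journeys[tp.email.strip().lower()].append(tp)
    for path in journeys.values():
        path.sort(key=lambda tp: tp.timestamp)
    return dict(journeys)


def last_click_channel(path):
    """The only channel that last click attribution would credit."""
    return path[-1].channel


def channels_in_order(path):
    """Every channel that touched the customer, first to last."""
    return [tp.channel for tp in path]


# The same customer on two devices; no cookie is shared between them.
log = [
    Touchpoint("Ann@Example.com", "affiliate", "desktop", 300),
    Touchpoint("ann@example.com", "ppc", "desktop", 100),
    Touchpoint(" ann@example.com", "influencer", "phone", 200),
]
journeys = build_journeys(log)
path = journeys["ann@example.com"]
print(last_click_channel(path))  # affiliate
print(channels_in_order(path))   # ['ppc', 'influencer', 'affiliate']
```

Keying on a normalized email rather than a cookie is what lets the phone visit join the desktop visits. How credit is then split across the channels is a separate decision that needs the kind of testing the post describes.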


Social Media Icons Return To Google Knowledge Panels http://bit.ly/2TqKaPG Some SEOs are noticing that Google has brought back the social media icons in the knowledge panels for both personalities and local businesses. I personally didn't notice they ever were gone but I guess they were. SEO via Search Engine Roundtable http://bit.ly/1sYxUD0 January 30, 2019 at 06:58AM

http://bit.ly/2Ur36ha

Google Ads Bug Shows Too Many Ads; It Is Resolved http://bit.ly/2sXDAoa We've seen it numerous times, where a bug leads Google to either show too many Google Ads in the search results or the organic listings do not load and Google only shows ads. It is rare, but we see it every now and then. Google recently confirmed a bug where they showed too many ads in the mobile results. SEO via Search Engine Roundtable http://bit.ly/1sYxUD0 January 30, 2019 at 06:41AM

Google AMP Crawl Error Bug Fix Is Here http://bit.ly/2sZBGDb We've been tracking the big AMP crawl error and AMP issues in search over the past week. Yesterday Google confirmed it was a bug on their end and said they would fix it. Today they documented the issue on their data anomalies page and RankRanger is showing AMP content coming back in the search results this morning. SEO via Search Engine Roundtable http://bit.ly/1sYxUD0 January 30, 2019 at 06:32AM

http://bit.ly/2sWty6E

Now Google My Business Bulk Users Can Download/Upload Spreadsheets http://bit.ly/2MHDJos Kara from Google announced a small update in the Google My Business Help forums saying "Now, bulk users can download and upload their locations to and from spreadsheets." "This feature enables bulk users to easily manage and organize their multiple locations... SEO via Search Engine Roundtable http://bit.ly/1sYxUD0 January 30, 2019 at 06:22AM

http://bit.ly/2RnKdtr

Facebook Privacy Updates and Interest-Based Ad Model by @martinibuster http://bit.ly/2Utv8bT

Facebook announced that it is creating an independent board for reviewing appeals of Facebook’s content policy. The board is intended to be transparent about its decisions and can overrule Facebook decisions. Facebook is also hiring the lawyers of its top privacy critics. All this is happening as the FTC is considering new rules on Internet privacy and the Supreme Court has agreed to adjudicate whether social media companies can regulate speech. Facebook’s interest-based advertising model may be caught in the middle.

Facebook announced a series of meetings around the world to receive input into the creation of this new board. According to the draft charter:

Facebook Hires Privacy Critics

In a privacy-related move, Facebook hired Nate Cardozo, a Senior Information Security Counsel for the Electronic Frontier Foundation, a free speech and privacy advocacy group. From that position, Cardozo has played a role in advocating for better privacy policies at Facebook. Facebook also hired Robyn Greene, the senior policy counsel and government affairs lead of the Open Technology Institute, which is dedicated to consumer Internet privacy and security.

FTC Judgment for Violating Privacy Agreement

All of this comes as the FTC is reportedly set to issue a multi-million dollar fine to punish Facebook for violating an agreement to improve its security policies.

Privacy is Related to Advertising

The whole reason privacy is an issue is that Facebook monetizes the personal data of its members. Restricting Facebook’s access to this information would transform its advertising platform into a less lucrative banner advertising model. It may be that protecting this advertising model is behind Facebook’s scramble to beef up its privacy protections. Better to self-regulate than be regulated from without.

The Interactive Advertising Bureau (IAB) submitted comments to the Federal Trade Commission in December 2018 to urge moderation in the FTC’s hearing on competition and consumer protection. The IAB seeks to guide the FTC’s decision on how to balance the needs of consumer privacy versus “economic development” and “innovation” by the advertising community. The IAB suggests that the “data-driven and ad-supported ecosystem benefits consumers and fuels economic growth.” It also states that current laws should be updated rather than following European privacy trends (GDPR) or privacy laws from the states, possibly a reference to the California Consumer Privacy Act that is set to take effect in 2020.

Interest Based Advertising

Many people are unaware of how their data is being used. There is an assumption of privacy, and it causes concern once people understand how their data is being used. Interest-based (and behavioral) targeting is under scrutiny because of privacy protection laws in Europe and one in California that is due to come into effect in 2020. If the Federal Trade Commission issues restrictive guidelines, this could mean a change to the advertising ecosystem that Facebook and Google depend upon. This may explain why Facebook is hiring the top privacy lawyers of its fiercest critics and creating a non-judicial way to adjudicate freedom of speech matters.

SEO via Search Engine Journal http://bit.ly/1QNKwvh January 30, 2019 at 04:24AM

http://bit.ly/2WwHKkb

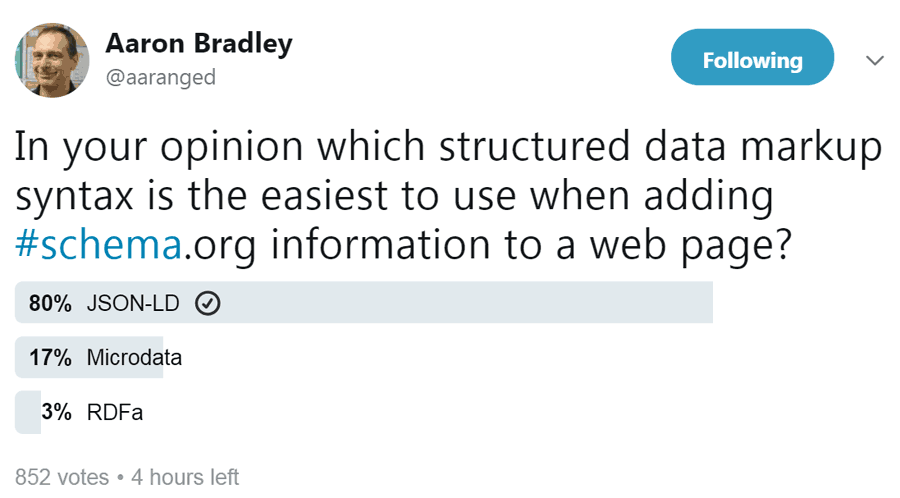

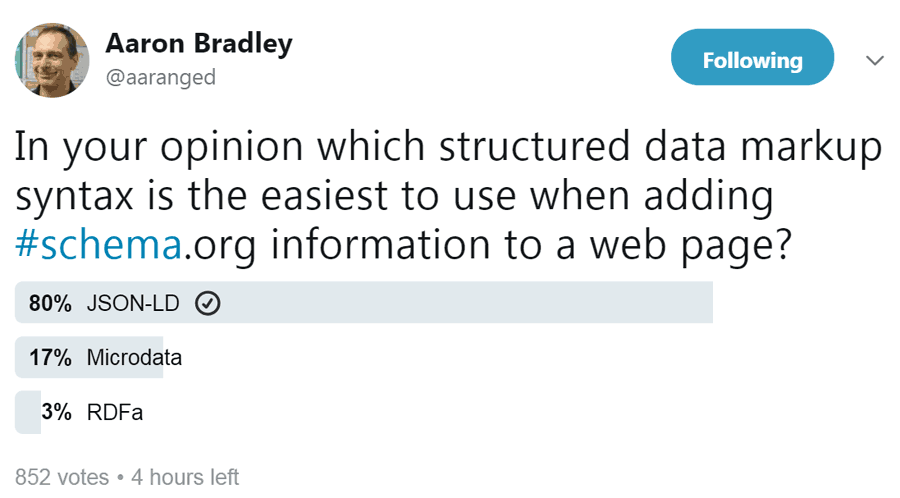

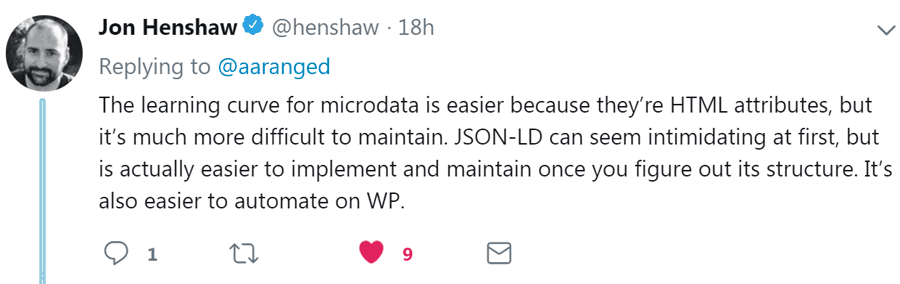

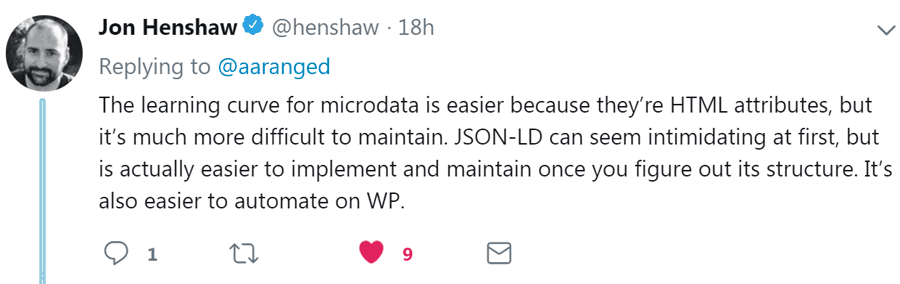

What is the Easiest Structured Data? by @martinibuster http://bit.ly/2DHoJo3

A poll on Twitter sought to answer the question: what is the best form of structured data? There are two popular forms of schema markup. One marks up the HTML directly (microdata). The other is a script (JSON-LD) that can be dropped in like a meta tag or a JavaScript snippet.

What is the Easiest Structured Data?

By far, the most popular form of structured data was JSON-LD. What makes it easy to use is that it can be easily scaled.

A poll on Twitter sought to answer which kind of structured data was easiest.

If you’re using a PHP based CMS, you can easily use PHP code fragments to insert whatever you want into a JSON-LD structured data template. This makes it easy to integrate into WordPress or any other PHP based CMS. A downside is that it is a new language to learn. However, unlike other computing and markup languages, there aren’t a lot of rules to memorize. Once you understand the basic rules as well as Google’s Structured Data Guidelines, almost anything is possible. Despite those advantages, one Twitter user shared that in their opinion JSON-LD is difficult to scale and that they preferred marking up the HTML code using Schema microdata:

Another person stated the opposite, that adding markup to HTML is more difficult than adding a JSON-LD script to a page.

John Henshaw observed that both ways had their benefits and drawbacks.

I agree with that assessment. While JSON-LD is a little more difficult to understand than the microdata, in my opinion it’s less difficult to learn than HTML. And the benefit of JSON-LD is that it’s easier to scale.

Images by Shutterstock, Modified by Author
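To illustrate the templating idea behind the scaling argument above, here is a minimal sketch. The article talks about PHP fragments in a PHP-based CMS; Python is used here only for illustration, and the function name and the choice of `Article` fields are assumptions rather than anything prescribed by the article or by Google. One template function fills a JSON-LD script from whatever values the CMS supplies, so the same code can serve every page.

```python
import json


def article_json_ld(headline, author_name, date_published):
    """Return a JSON-LD <script> tag for one article (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date string
    }
    # json.dumps handles quoting; escaping "</" keeps a stray "</script>"
    # inside a value from closing the tag early ("\/" is valid JSON).
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


tag = article_json_ld(
    "What is the Easiest Structured Data?",
    "Example Author",
    "2019-01-30",
)
print(tag)
```

Because the markup lives in one script block instead of being spread across HTML attributes, changing the template changes every page at once, which is the scaling benefit the JSON-LD supporters in the poll describe.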

SEO via Search Engine Journal http://bit.ly/1QNKwvh January 30, 2019 at 04:24AM

http://bit.ly/2Rtdvap

10 Ways to Think Like Googlebot & Boost Your Technical SEO by @seocounseling http://bit.ly/2Utv4Jb

Have you found yourself hitting a plateau with your organic growth? High-quality content and links will take you far in SEO, but you should never overlook the power of technical SEO. One of the most important skills to learn for 2019 is how to use technical SEO to think like Googlebot. Before we dive into the fun stuff, it’s important to understand what Googlebot is, how it works, and why we need to understand it.

What Is Googlebot?

Googlebot is a web crawler (a.k.a., robot or spider) that scrapes data from webpages. Googlebot is just one of many web crawlers. Every search engine has its own branded spider. In the SEO world, we refer to these branded bot names as “user agents.” We will get into user agents later, but for now, just understand that when we say user agent, we mean a specific web crawling bot. Some of the most common user agents include:

How Does Googlebot Work?

We can’t start to optimize for Googlebot until we understand how it discovers, reads, and ranks webpages.

How Google’s Crawler Discovers Webpages

Short answer: Links, sitemaps, and fetch requests.

Long answer: The fastest way for you to get Google to crawl your site is to create a new property in Search Console and submit your sitemap. However, that’s not the whole picture. While sitemaps are a great way to get Google to crawl your website, they do not account for PageRank. Internal linking is a recommended method for telling Google which pages are related and hold value. There are many great articles published across the web about PageRank and internal linking, so I won’t get into it now. Google can also discover your webpages from Google My Business listings, directories, and links from other websites. This is a simplified version of how Googlebot works. To learn more, you can read Google’s documentation on their crawler.

How Googlebot Reads Webpages

Google has come a long way with their site rendering. The goal of Googlebot is to render a webpage the same way a user would see it. To test how Google views your page, check out the Fetch and Render tool in Search Console. It shows a Googlebot view next to a user view, which is useful for spotting differences between what Googlebot and your visitors see.

Technical Ranking Factors

Just like traditional SEO, there is no silver bullet to technical SEO. All 200+ ranking factors are important! If you’re a technical SEO professional thinking about the future of SEO, then the biggest ranking factors to pay attention to revolve around user experience.

Why Should We Think Like Googlebot?

When Google tells us to make a great site, they mean it. Yes, that’s a vague statement from Google, but at the same time, it’s very accurate. If you can satisfy users with an intuitive and helpful website, while also appeasing Googlebot’s requirements, you may experience more organic growth.

User Experience vs. Crawler Experience

When creating a website, who are you looking to satisfy? Users or Googlebot?

Short answer: Both!

Long answer: This is a hot debate that can cause tension between UX designers, web developers, and SEO pros. However, this is also an opportunity for us to work together to better understand the balance between user experience and crawler experience. UX designers typically have users’ best interest in mind, while SEO professionals are looking to satisfy Google. In the middle, we have web developers trying to create the best of both worlds.

As SEO professionals, we need to learn the importance of each area of the web experience. Yes, we should be optimizing for the best user experience. However, we should also optimize our websites for Googlebot (and other search engines). Luckily, Google is very user-focused. Most modern SEO tactics are focused on providing a good user experience. The following 10 Googlebot optimization tips should help you win over your UX designer and web developer at the same time.

1. Robots.txt

The robots.txt is a text file that is placed in the root directory of a website. It is one of the first things that Googlebot looks for when crawling a site. It’s highly recommended to add a robots.txt to your site and include a link to your sitemap.xml. There are many ways to optimize your robots.txt file, but it’s important to take caution doing so. A developer might accidentally leave a sitewide disallow in robots.txt when moving a dev site to the live site, blocking all search engines from crawling it. Even after this is corrected, it could take a few weeks for organic traffic and rankings to return. There are many tips and tutorials on how to optimize your robots.txt file. Do your research before attempting to edit your file. Don’t forget to track your results!

2. Sitemap.xml

Sitemaps are a key method for Googlebot to find pages on your website and are widely considered important for getting those pages crawled and indexed. Here are a few sitemap optimization tips:

3. Site Speed

Loading speed has become one of the most important ranking factors, especially for mobile devices. If your site’s load speed is too slow, Googlebot may lower your rankings. An easy way to find out if Googlebot thinks your website loads too slowly is to test your site speed with any of the free tools out there. Many of these tools will provide recommendations that you can send to your developers.

4. Schema

Adding structured data to your website can help Googlebot better understand the context of your individual web pages and website as a whole. However, it’s important that you follow Google’s guidelines. For efficiency, it’s recommended that you use JSON-LD to implement structured data markup. Google has even noted that JSON-LD is their preferred markup format.

5. Canonicalization

A big problem for large sites, especially ecommerce, is the issue of duplicate webpages. There are many practical reasons to have duplicate webpages, such as different language pages. If you’re running a site with duplicate pages, it’s crucial that you identify your preferred webpage with a canonical tag and hreflang attribute.

6. URL Taxonomy

Having a clean and defined URL structure has been shown to lead to higher rankings and improve user experience. Setting parent pages allows Googlebot to better understand the relationship of each page. However, if you have pages that are fairly old and ranking well, Google’s John Mueller does not recommend changing the URL. Clean URL taxonomy is really something that needs to be established from the beginning of the site’s development. If you absolutely believe that optimizing your URLs will help your site, make sure you set up proper 301 redirects and update your sitemap.xml.

7. JavaScript Loading

While static HTML pages are arguably easier to rank, JavaScript allows websites to provide more creative user experiences through dynamic rendering. In 2018, Google placed a lot of resources toward improving JavaScript rendering. In a recent Q&A session, John Mueller stated that Google plans to continue focusing on JavaScript rendering in 2019. If your site relies heavily on dynamic rendering via JavaScript, make sure your developers are following Google’s recommendations on best practices.

8. Images

Google has been hinting at the importance of image optimization for a long time, but has been speaking more about it in recent months. Optimizing images can help Googlebot contextualize how your images relate to and enhance your content. If you’re looking into some quick wins on optimizing your images, I recommend:

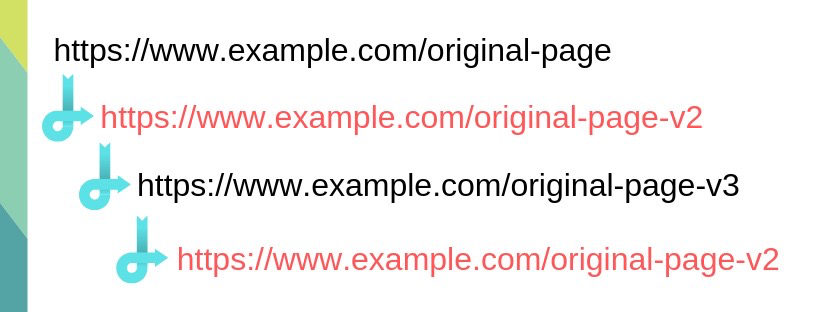

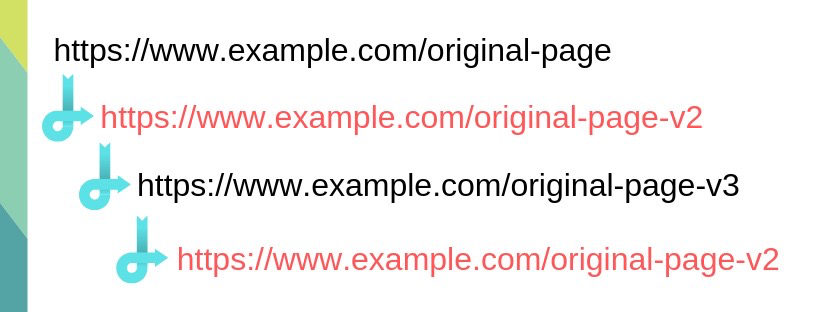

9. Broken Links & Redirect Loops

We all know broken links are bad, and some SEOs have claimed that broken links can waste crawl budget. However, John Mueller has stated that broken links do not reduce crawl budget. Given the mixed information, I believe that we should play it safe and clean up all broken links. Check Google Search Console or your favorite crawling tool to find broken links on your site!

Redirect loops are another phenomenon that is common with older sites. A redirect loop occurs when a chain of redirects points back to a URL earlier in the chain.

Version 3 of the redirect chain redirects back to the previous page (v2), which continues to redirect back to version 3, which causes the redirect loop.
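The loop in the caption above can be detected mechanically. The following is a minimal sketch that works on a plain mapping of source URL to redirect target, such as one exported from a crawl. The function name, the `max_hops` limit, and the status labels are assumptions made for illustration, not part of any crawling tool.

```python
def trace_redirects(start, redirects, max_hops=10):
    """Follow redirects from `start`; return (path, status).

    `redirects` maps a URL to the URL it redirects to. The status is
    "ok" when the chain ends on a page that does not redirect, "loop"
    when it revisits a URL, and "too_long" after `max_hops` redirects.
    """
    path = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        path.append(current)
        if current in seen:
            return path, "loop"
        seen.add(current)
        if len(path) - 1 >= max_hops:
            return path, "too_long"
    return path, "ok"


# The situation from the caption: v3 points back to v2.
looping = {"/v1": "/v2", "/v2": "/v3", "/v3": "/v2"}
print(trace_redirects("/v1", looping))
# (['/v1', '/v2', '/v3', '/v2'], 'loop')

# A healthy chain: the last element of the path is the final URL.
chain = {"/old": "/newer", "/newer": "/newest"}
print(trace_redirects("/old", chain))
# (['/old', '/newer', '/newest'], 'ok')
```

For a chain that ends normally, the last entry in the returned path is the final destination, which is the URL that internal links should point to directly.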

Search engines often have a hard time crawling redirect loops and can potentially end the crawl. The best action to take here is to replace the original link on each page with the final link.

10. Titles & Meta Descriptions

This may be a bit old hat for many SEO professionals, but it’s proven that having well-optimized titles and meta descriptions can lead to higher rankings and CTR in the SERP. Yes, this is part of the fundamentals of SEO, but it’s still worth including because Googlebot does read this. There are many theories about best practice for writing these, but my recommendations are pretty simple:

Summary

When it comes to technical SEO and optimizing for Googlebot, there are many things to pay attention to. Much of it requires research, and I recommend asking your colleagues about their experience before implementing changes to your site. While trailblazing new tactics is exciting, it has the potential to lead to a drop in organic traffic. A good rule of thumb is to test these tactics by waiting a few weeks between changes. This will allow Googlebot to have some time to understand sitewide changes and better categorize your site within the index.

Image Credits: Screenshot taken by author, January 2019

SEO via Search Engine Journal http://bit.ly/1QNKwvh January 30, 2019 at 04:24AM
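Returning to the robots.txt tip in the post above: a leftover sitewide disallow is the kind of mistake that can be caught with a small check before a dev site goes live. This sketch uses Python's standard `urllib.robotparser` on the text of a robots.txt file. The helper names and sample file contents are assumptions for illustration, `site_maps()` requires Python 3.8 or later, and the standard-library parser only approximates how Googlebot itself interprets the file.

```python
from urllib.robotparser import RobotFileParser


def _parse(robots_txt):
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def blocked_paths(robots_txt, user_agent, paths):
    """Return the paths that `user_agent` is not allowed to crawl."""
    parser = _parse(robots_txt)
    return [p for p in paths if not parser.can_fetch(user_agent, p)]


def sitemap_urls(robots_txt):
    """Return Sitemap entries listed in the file (empty list if none)."""
    return _parse(robots_txt).site_maps() or []


# A dev-site file accidentally left in place: everything is blocked.
dev = "User-agent: *\nDisallow: /\n"
print(blocked_paths(dev, "Googlebot", ["/", "/products/"]))
# ['/', '/products/']

# A live-site file: only the cart is blocked, and the sitemap is linked.
live = (
    "User-agent: *\n"
    "Disallow: /cart/\n"
    "Sitemap: https://www.example.com/sitemap.xml\n"
)
print(blocked_paths(live, "Googlebot", ["/", "/cart/checkout"]))
# ['/cart/checkout']
print(sitemap_urls(live))
# ['https://www.example.com/sitemap.xml']
```

Running a check like this against a handful of important URLs after every deployment is one way to follow the post's advice to track results after editing the file.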

http://bit.ly/2CWpLuw

Google News Digest: New Publishing Platform, Smart Campaigns, New G Suite Pricing and More http://bit.ly/2G1JJZ5

With the new year now well underway, Google is rolling out a fresh stream of updates for search users, advertisers, and website owners. Its more notable updates include a new publishing platform for local news (“Newspack”), a new pricing strategy for G Suite Basic and Business Editions, and the integration of AdWords Express campaigns into the Google Ads platform. SEO via SEMrush http://bit.ly/1K8Zzbp January 30, 2019 at 03:20AM |


|
