
http://img.youtube.com/vi/0Q7kJZdZlUM/0.jpg

Search Buzz Video Recap: Google Algorithm Updates, Info Command Gone, Bing Updates, Google Ads, New UIs & More https://ift.tt/2THka1B

This week in search, we saw a few more algorithm shifts in the Google search results, one around March 26th and one around March 20th. Google dropped the info command, removing the ability to see the Google-selected canonical for any URL, but you can now see that information for your verified properties in Google Search Console’s URL inspection tool. I published the survey results for the Google March 2019 core update this week. Bing announced it improved its intelligent answers, text-to-speech and visual search capabilities. Google’s AMP and mobile-friendly testing tools now allow for code editing in the tool. Google’s messaging around rel=next/prev is that nothing has changed, but it has. Gary Illyes was the Googler who spotted that it had changed. Google said accents in URLs work fine. Google said you can redirect lower quality pages to higher quality pages. Google published a video on SEO for Angular sites. The old Search Console has only a handful of features remaining. Google said you should slowly remove your other properties once you have verified the domain property in Search Console. Google said Google Partners do not get preferential treatment in search. Google is testing a new search bar with icons in it. Google Image search is testing a slide-in article feature. Google launched the new Google Ads Editor to replace AdWords Editor. Google Ads Keyword Planner has some new features. Matt Cutts still leaves SEO spam traps. Ahrefs wants to compete with Google on search. That was this past week in search at the Search Engine Roundtable.

Make sure to subscribe to our video feed or subscribe directly on iTunes to be notified of these updates and download the video in the background. Here is the YouTube version of the feed: For the original iTunes version, click here.
Search Topics of Discussion: Please do subscribe via iTunes or on your favorite RSS reader. Don't forget to comment below with the right answer and good luck! SEO via Search Engine Roundtable https://ift.tt/1sYxUD0 March 29, 2019 at 07:57AM


https://ift.tt/2FIozxs

I’m an Ad & You Can, Too! by @beanstalkim https://ift.tt/2WvRiv6

I got up from the hotel desk that morning in August of 2006. The WiFi was thankfully working and I’d had a chance to answer my emails. I’d sat not at a laptop, but at a full PC which I’d packed up, CRT monitor and all, and taken on the flight to the conference for reasons I will get to shortly. I was hungry and looking forward to the breakfast provided, and so I exited my hotel room. It was a sweltering 95-degree day in San Jose as I marched toward the McEnery Convention Center. The sun beat down on me in my suit from a clear blue sky for the full 28-minute, 1.3-mile walk, and I’d have to do the same that night. Thankfully it would be cooler. It was my last day at the conference, though not the last day of the conference itself. I’d had to cut my trip short but was looking forward to the sessions ahead and an evening out. I’d spoken the day before on optimizing for all three major engines. They were Google, MSN, and Yahoo!, if you’re wondering. It was my first time speaking at a conference, and now I could relax.

I settled in, enjoyed the day, and when lunch rolled around it was delicious and boxed. I took two. That evening we went to the hotel bar. I nursed a beer and, when the opportunity arose, I pulled $20 from my wallet and bought my friend and now Webmaster Radio co-host Jim Hedger a Crown and Coke. He’d given me some valuable advice on surviving my first session and supported me throughout. It was the least I could do, and I’d been waiting to do it. Waiting, that is, because I’d held on to that $20 for the duration of my time in San Jose. Not because it was a special bill, but because it was the last one I had. And that’s my point.

I Was an Ad

I was an ad. A manufactured version of the person I wanted others to see. The reason I’d brought my computer with me is that I couldn’t afford a laptop.
So instead I packed up the computer from “my office”, a beat-up desk at the foot of my bed, and brought it on the plane with me because I needed to keep up with clients. I walked for 28 minutes through miserable heat in wool suits because the HoJo’s was a couple hundred dollars cheaper than anything around the conference. I couldn’t spend money I didn’t have on a cab, and so I walked, immediately ducking into the men’s room on my arrival at the conference to let my body cool off so I wasn’t sweating when I ran into people. I took two lunches because it would save me having to buy food later. I was almost found out when I was invited to dinner one night with a few folks. I made up a client emergency and then stayed out of sight for a while. There was no way I was making an extra trip to my hotel. I’d brought $50 on that trip and it had to last me the full conference. A conference I cut a day short so as not to have to spend anything extra on a cheap hotel, because I had nothing extra to spend. I’d nursed that $50 right to the last evening, and needed to save some for the cab back to the airport. But I was able to treat my friend to a drink. I was able to play the part I was supposed to play as best I could play it. I was surrounded by successful people and I was not one of them. But I’d pulled it off. I’d looked like one. I was the ad I wanted to be. No one knew I was an imposter.

That Was Not the Last Time…

That was not the last time I played that role. In fact, I do it every day. Yes, I have a laptop now. I sit at a desk I’m happy to sit at, no longer at the foot of my bed. When I travel, I stay in decent proximity to conferences and I’ll grab a Lyft to get to places more than a few minutes away. And I’ll probably buy myself some food. But even still, the version of me that is presented to the world is not the real me.
And that’s important to remember, because the version of those around me that I see, the version I compare myself to, is very likely as manufactured as I am. This is not to say we are fake; it’s to say that back in 2006 I presented the version of me that I thought the world needed to see. I felt I needed to be an ad to earn the respect of my peers, and to hopefully land a client. This tendency has only been amplified by social media, though it was certainly not created by it, and being in marketing, skilled in the art, we know exactly how to craft messages. Show just the right wins. Show just enough struggle. Be flawed in just the right way.

Yes, I’m an Ad

And as I see the successes of the people around me and judge myself based on them, I try to remember that they’re all ads too. We are looking at manicured versions of each other, comparing ourselves to highlight reels that were created by marketers. And we’re trying to live up to them. I am not what I appear. In some ways, I may be better, but in a great many more I am certainly worse. And even now, in the example I have chosen to use to illustrate this point, the words I have chosen to convey it, and the way I am closing this piece, I am presenting the version of myself that I wish to be seen as. Remember this when you’re looking at the folks on a stage rocking it. When you’re hearing about how successful their companies are. How amazing they did on a campaign. How fantastic their dinner was (they made it themselves!). Remember, as you feel you haven’t accomplished what others have, I’m an ad. And you can, too!

More Resources:

Image Credits

Picture From SES 2006: Barry Schwartz on Flickr

SEO via Search Engine Journal https://ift.tt/1QNKwvh March 29, 2019 at 07:54AM

https://ift.tt/2FJpDBq

Google Tests Adding Icons Back To The Search Bar Filters https://ift.tt/2V2lSfB

Google is testing showing icons in the search bar filters next to the all, news, maps, images, shopping and other buttons. This takes them back to a user interface they had in 2011, which they dropped a year or so later. Here is a screenshot from Joe Youngblood on Twitter of the design test, which I cannot replicate:

Here is what I see:

Joe is not the only one seeing this test; here are others on Twitter:

Do you see this as well? I tried numerous browsers and operating systems, but I cannot replicate this. Forum discussion at Twitter. SEO via Search Engine Roundtable https://ift.tt/1sYxUD0 March 29, 2019 at 07:03AM

Google Launches Google Ads Editor v.1 Replacing AdWords Editor https://ift.tt/2HN09VM

Google has launched the new Google Ads Editor to replace the AdWords Editor. This comes well after Google rebranded AdWords to Google Ads. With this release, Google rebranded the AdWords Editor to Google Ads Editor and also added features. Google wrote "Today we're announcing Google Ads Editor, v1. It’s a new and improved version of the Editor that you’ve come to know and love over the past 13 years. We waited to formally announce the new Google Ads brand on an Editor release that incorporates a significant update that addresses long-standing requests from the community." Here is what is new:

Full cross-account management: In the past, campaign managers could only make changes in the Editor UI for a single account at any given time. This meant you spent more time on the same tasks. For the first time, Editor now supports full, cross-account management. This means you’ll be able to use Editor seamlessly across your Google Ads accounts, all from a single window. For example, you’ll be able to easily add the same set of keywords across different accounts, update campaign settings across your entire book of business, or download relevant stats across any grouping of your accounts.

Improved design and usability: We’ve also improved the interface to help you navigate features and execute tasks more quickly. For example, based off of UX research we saw that users had difficulty remembering where each setting was. So we created a right-hand Edit panel to improve your ability to scan. We also added search functionality so that you can quickly find what you need.

Google Ads Editor v1 also helps you manage accounts at scale in three areas: Workflow; Bids & Budget; and Calls & Messaging.

Ginny Marvin covered this in detail at Search Engine Land, so check that out. Forum discussion at Twitter. SEO via Search Engine Roundtable https://ift.tt/1sYxUD0 March 29, 2019 at 06:52AM

https://ift.tt/2TKp7XH

Google: Long Term Plan You Should Switch To Domain Properties & Drop Other Forms https://ift.tt/2I5xE5l

Google's John Mueller said on Twitter that a good long-term plan for those who have verified properties in Google Search Console is to drop the individual property verification methods and just go with the domain property, which combines data across all of a site's protocols and subdomains. For example, for this site, I have the http, https and domain properties all verified in Google Search Console. Here is a screenshot:

What John is recommending is that in the long term, you may just want to have the domain property version in your account and remove the other ones.

That said, some detail-oriented SEOs may want to keep all of them to do more granular analysis. Forum discussion at Twitter. SEO via Search Engine Roundtable https://ift.tt/1sYxUD0 March 29, 2019 at 06:37AM

https://ift.tt/2YykIdF

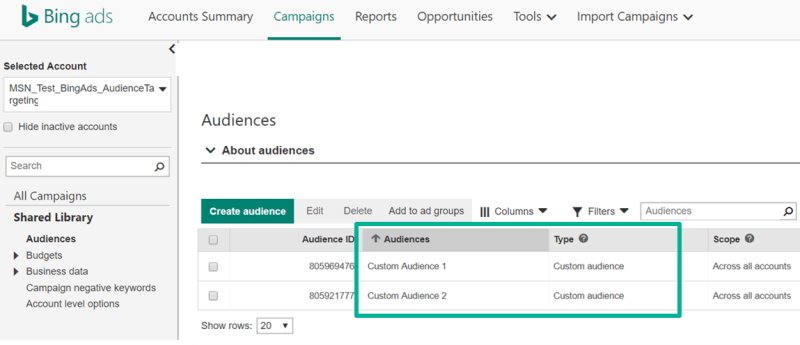

Bing Ads Custom Audiences now available in most markets https://ift.tt/2JLeiEX

Bing Ads began piloting Custom Audiences nearly two years ago. Custom Audiences are now generally available in all Bing Ads markets with the exceptions of the E.U., Norway and Switzerland.

Why you should care

Custom Audiences allows you to remarket to your existing customers with messaging customized for each customer segment, such as loyalty members or high lifetime value customers. Unlike other platforms (Google, Facebook) that allow advertisers to upload their customer email lists directly, Bing Ads has opted to partner with a handful of data management platforms (DMPs). Adobe Audience Manager, LiveRamp and Oracle BlueKai are the three current partners. Once you enable the integration with one of the DMP partners, your Custom Audiences will appear in Audiences under Shared Library in Bing Ads. You can then associate custom audiences at the ad group level as target and bid, bid only, or as an exclusion. Then set bid adjustments at the audience level.

More on Custom Audiences

About The Author

Ginny Marvin is Third Door Media's Editor-in-Chief, managing day-to-day editorial operations across all of our publications. Ginny writes about paid online marketing topics including paid search, paid social, display and retargeting for Search Engine Land, Marketing Land and MarTech Today. With more than 15 years of marketing experience, she has held both in-house and agency management positions. She can be found on Twitter as @ginnymarvin.

SEO via Search Engine Land https://ift.tt/1BDlNnc March 28, 2019 at 09:22AM

https://ift.tt/2UWknPX

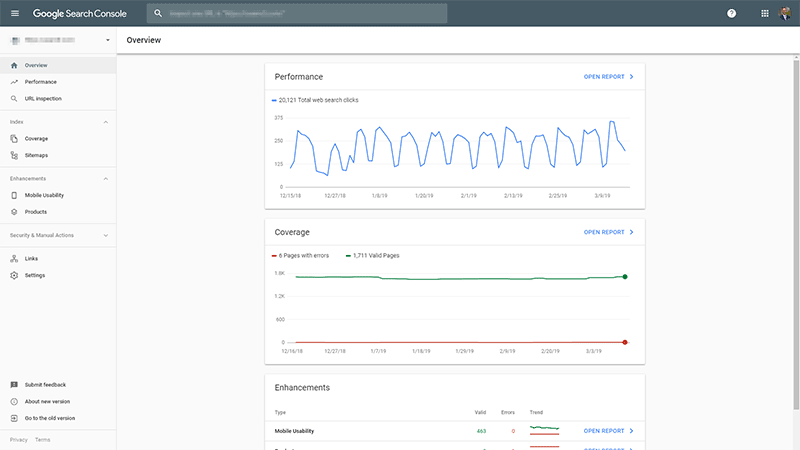

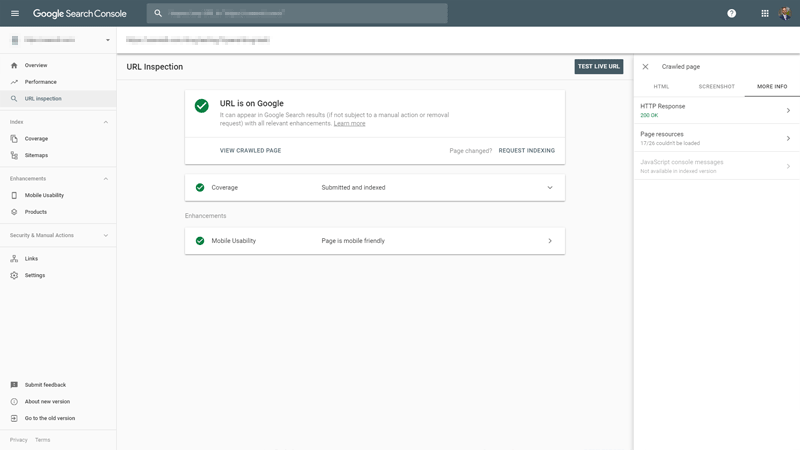

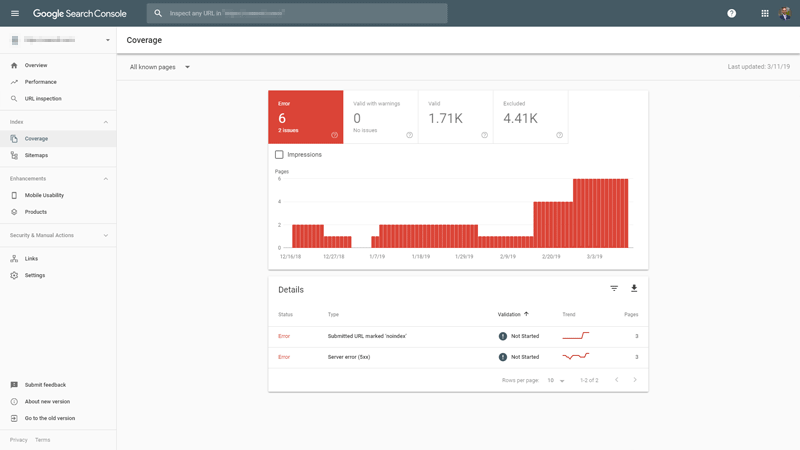

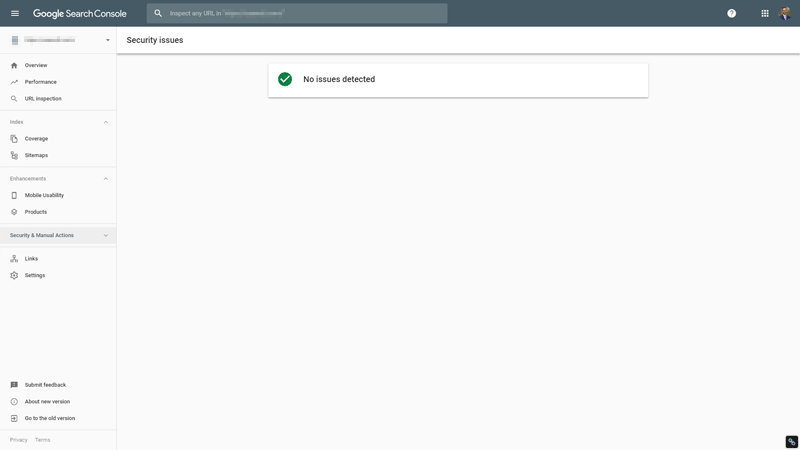

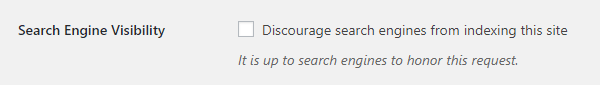

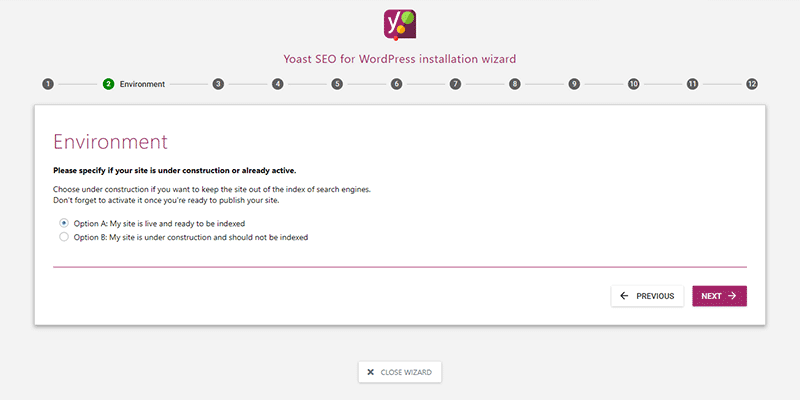

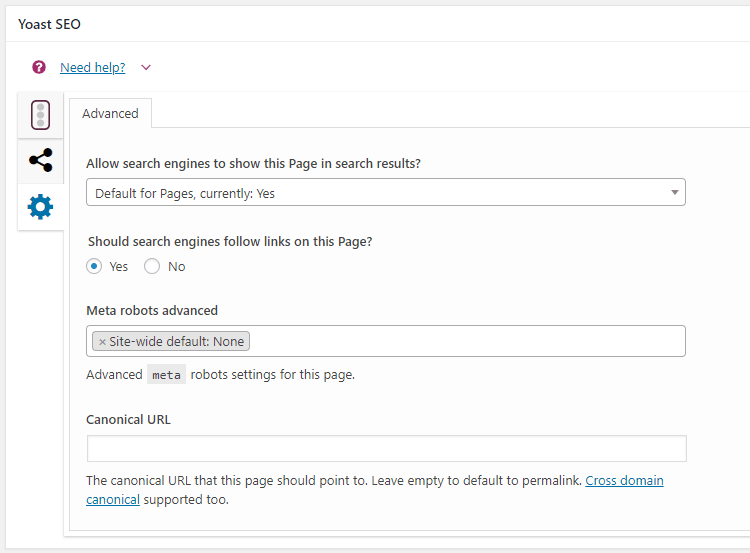

How to Analyze the Cause of a Ranking Crash by @jeremyknauff https://ift.tt/2UXewcZ

In 2012, I had a large client that was ranked in the top position for most of the terms that mattered to them. And then, overnight, their ranking and traffic dropped like a rock. Have you ever experienced this? If so, you know exactly how it feels. The initial shock is followed by the nervous feeling in the pit of your stomach as you contemplate how it will affect your business or how you’ll explain it to your client. After that comes the frenzied analysis to figure out what caused the drop and, hopefully, fix it before it causes too much damage. In this case, we didn’t need Sherlock Holmes to figure out what happened. My client had insisted that we do whatever it took to get them to rank quickly. The timeframe they wanted was not months, but weeks, in a fairly competitive niche. If you’ve been involved in SEO for a while, you probably already know what’s about to go down here. The only way to rank in the timeline they wanted was to use tactics that violated Google’s Webmaster Guidelines. While this was risky, it often worked, at least until Google released its Penguin update in April 2012. Despite my clearly explaining the risks, my client demanded that we do whatever it took. So that’s what we did. When their ranking predictably tanked, we weren’t surprised. Neither was my client. And they weren’t upset, because we had explained the rules and the consequences of breaking those rules. They weren’t happy that they had to start over, but the profits they reaped leading up to that more than made up for it. It was a classic risk vs. reward scenario. Unfortunately, most problems aren’t this easy to diagnose, especially when you haven’t knowingly violated any of Google’s ever-growing list of rules. In this article, you’ll learn some ways you can diagnose and analyze a drop in ranking and traffic.

1. Check Google Search Console

This is the first place you should look.
Google Search Console provides a wealth of information on a wide variety of issues. Google Search Console will send email notifications for many serious issues, including manual actions, crawl errors, and schema problems, to name just a few. And you can analyze a staggering amount of data to identify other less obvious but just as severe issues.

Overview is a good section to start with because it lets you see the big picture, giving you some direction to get more granular.

If you want to analyze specific pages, URL Inspection is a great tool because it allows you to look at any page through the beady little eyes of Googlebot. This can come in especially handy when problems on a page don’t cause an obvious issue on the front end but do cause issues for Google’s bots.

Coverage is great for identifying issues that can affect which pages are included in Google’s index, like server errors or pages that have been submitted but contain the noindex meta tag.

Generally, if you receive a manual penalty, you’ll know exactly why. It’s most often the result of a violation of Google’s Webmaster Guidelines, such as buying links or creating spammy low-quality content. However, it can sometimes be as simple as an innocent mistake in configuring schema markup. You can check the Manual Actions section for this information. GSC will also send you an email notification of any manual penalties.

The Security section will identify any issues with viruses or malware on your website that Google knows about. It’s important to point out that just because there is no notice here, it doesn’t mean that there is no issue; it just means that Google isn’t aware of it yet.

2. Check for Noindex & Nofollow Meta Tags & Robots.txt Errors

This issue is most common when moving a new website from a development environment to a live environment, but it can happen anytime. It’s not uncommon for someone to click the wrong setting in WordPress or a plugin, causing one or more pages to be deindexed.
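To see which live pages actually carry a noindex directive, rather than relying only on what the WordPress or plugin settings say, you can scan each page's HTML for robots meta tags. Here is a minimal sketch using Python's standard-library html.parser. It assumes you already have each page's HTML as a string (fetching and crawling are left out), the function names are illustrative rather than from any tool mentioned here, and it does not check X-Robots-Tag HTTP headers or robots.txt.

```python
from html.parser import HTMLParser

# Meta tag names whose content this sketch treats as robots directives.
ROBOTS_META_NAMES = {"robots", "googlebot"}


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names, but not attribute values.
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").strip().lower() not in ROBOTS_META_NAMES:
            return
        for directive in attr_map.get("content", "").split(","):
            directive = directive.strip().lower()
            if directive:
                self.directives.add(directive)


def robots_directives(html):
    """Return the set of lowercase robots meta directives found in an HTML string."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return parser.directives


def is_noindexed(html):
    """True if the robots meta tags include noindex (or "none", which implies it)."""
    directives = robots_directives(html)
    return "noindex" in directives or "none" in directives


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
    print(sorted(robots_directives(page)))  # ['nofollow', 'noindex']
    print(is_noindexed(page))  # True
```

In practice you would run this first over the URLs that lost ranking and traffic, then widen it to every page on the site.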
Review the Search Engine Visibility setting in WordPress at the bottom of the Reading section. You’ll need to make sure the “Discourage search engines from indexing this site” box is left unchecked.

Review the index setting for any SEO-related plugins you have installed. (Yoast, in this example.) This is found in the installation wizard.

Review page-level settings related to indexation. (Yoast again, in this example.) This is typically found below the editing area of each page. You can prioritize the pages to review by starting with the ones that have lost ranking and traffic, but it’s important to review all pages to help ensure the problem doesn’t become worse.

It’s equally important to check your robots.txt file to make sure it hasn’t been edited in a way that blocks search engine bots. A properly configured robots.txt file might look like this:

On the other hand, an improperly configured robots.txt file might look like this:
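Besides reading the file by eye, you can test specific URLs against a set of robots.txt rules. The sketch below uses Python's standard-library urllib.robotparser; both robots.txt bodies and the example.com URLs are hypothetical illustrations, not the files from the original screenshots. Note that this parser applies rules in file order and does not implement Google's wildcard and longest-match semantics, so for files that mix Allow and Disallow rules, Google's own tester remains the authority.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical files: one blocks only the admin area, the other blocks the whole site.
INTENDED_ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
"""

BLOCK_EVERYTHING_ROBOTS_TXT = """\
User-agent: *
Disallow: /
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs in `urls` that `user_agent` is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() accepts an iterable of lines
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    urls = [
        "https://example.com/",
        "https://example.com/blog/post",
        "https://example.com/wp-admin/options.php",
    ]
    print(blocked_urls(INTENDED_ROBOTS_TXT, urls))
    # ['https://example.com/wp-admin/options.php']
    print(blocked_urls(BLOCK_EVERYTHING_ROBOTS_TXT, urls))
    # all three URLs: an accidental "Disallow: /" blocks the entire site
```

Running the same URL list against the live file after every deployment is a cheap way to catch the development-to-production mistake described in this section.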

Google offers a handy tool in Google Search Console to check your robots.txt file for errors.

3. Determine If Your Website Has Been Hacked

When most people think of hacking, they likely imagine nefarious characters in search of juicy data they can use for identity theft. As a result, you might think you’re safe from hacking attempts because you don’t store that type of data. Unfortunately, that isn’t the case. Hackers are opportunists playing a numbers game, so your website is simply another vector from which they can exploit other vulnerable people: your visitors. By hacking your website, they may be able to spread malware and viruses to even more computers, where they might find the type of data they’re looking for. But the impact of hacking doesn’t end there. We all know the importance of inbound links from other websites, and rather than doing the hard work to earn those links, some people will hack into other websites and embed their links there. Typically, they will take additional measures to hide these links by placing them in old posts or even by using CSS to disguise them. In the worst cases, a hacker may single your website out for destruction, deleting your content or, even worse, filling it with shady outbound links, garbage content, and even viruses and malware. This can cause search engines to completely remove a website from their index. Taking appropriate steps to secure your website is the first and most powerful action you can take. Most hackers are looking for easy targets, so if you force them to work harder, they will usually just move on to the next target. You should also ensure that you have automated systems in place to screen for viruses and malware. Most hosting companies offer this, and it is often included at no charge with professional-grade web hosting. Even then, it’s important to scan your website from time to time to review any outbound links.
Screaming Frog makes it simple to do this, outputting the results as a CSV file that you can quickly browse to identify anything that looks out of place. If your ranking drop was related to being hacked, it should be obvious, because even if you don’t identify it yourself, you will typically receive an email notification from Google Search Console. The first step is to immediately secure your website and clean up the damage. Once you are completely sure that everything has been resolved, you can submit a reconsideration request through Google Search Console.

4. Analyze Inbound Links

This factor is pretty straightforward. If inbound links caused a drop in your ranking and traffic, it will generally come down to one of three issues: a manual action, a devaluation of those links, or an increase in links to one or more competitors’ websites.

A manual action will result in a notification from Google Search Console. If this is your problem, it’s a simple matter of removing or disavowing the links and then submitting a reconsideration request. In most cases, doing so won’t immediately improve your ranking, because the links had artificially boosted your ranking before your website was penalized. You will still need to build new, quality links that meet Google’s Webmaster Guidelines before you can expect to see any improvement.

A devaluation simply means that Google now assigns less value to those particular links. This could be a broad algorithmic devaluation, as we see with footer links, or the links could be devalued because of the actions of the website owners. For example, a website known to buy and/or sell links could be penalized, making the links from that site less valuable, or even worthless.

An increase in links to one or more competitors’ websites makes them look more authoritative than your website in the eyes of Google. There’s really only one way to solve this, and that is to build more links to your website. The key is to ensure the links you build meet Google’s Webmaster Guidelines; otherwise, you risk eventually being penalized and starting over.

5. Analyze Content

Google’s algorithms are constantly changing. I remember a time when you could churn out a bunch of low-quality, 300-word pages and dominate the search results. Today, that generally won’t even get you on the first page for moderately competitive topics, where we typically see 1,000+ word pages holding the top positions. But it goes much deeper than that. You’ll need to evaluate what the competitors who now outrank you are doing differently with their content. Word count is only one factor, and on its own, doesn’t mean much. In fact, rather than focusing on word count, you should determine whether your content is comprehensive.
In other words, does it answer all of the common questions someone may have on the topic more thoroughly than the content on competitors’ websites? Is yours well-written, original, and useful? Don’t answer this based on feelings; use one of the reading difficulty tests so that you’re working from quantifiable data. Yoast’s SEO plugin scores this automatically as you write and edit right within WordPress. SEMrush offers a really cool plugin that does the same within Google Docs, but there are a number of other free tools available online. Is it structured for easy reading, with subheadings, lists, and images? People generally don’t read content online; instead, they scan it. Breaking it up into manageable chunks makes it easier for visitors to scan, making them more likely to stick around long enough to find the info they’re looking for. This is something that takes a bit of grunt work to properly analyze. Tools like SEMrush are incredibly powerful and can provide a lot of insight on many of these factors, but there are some factors that still require a human touch. You need to consider the user intent. Are you making it easier for them to quickly find what they need? That should be your ultimate goal.

More Resources:

Image Credits

Featured Image: Created by author, March 2019

SEO via Search Engine Journal https://ift.tt/1QNKwvh March 28, 2019 at 09:18AM

https://ift.tt/2Ww4K1R

The 4 Pillars of Enterprise SEO Success by @SEOGoddess https://ift.tt/2I1PA0F

Before starting my position at Groupon, I interviewed for director and senior manager positions in SEO with REI and Amazon. I noticed that there appears to be a shift in requirements for SEO positions. Companies are realizing that being successful at SEO doesn’t mean just being good at the technical stuff or at identifying opportunities for growth. Now, these companies know that they need people who can communicate effectively with executives as well as with a mix of teams (technical, creative, some that know SEO and some that have no clue), all in the same room at the same time. In addition, companies are looking for deeper-level analytical and technical abilities, with expectations of understanding SQL, large data sets, and issues that arise from dynamically built sites such as:

During my time as the SEO manager at Nordstrom, I identified the same pattern and restructured my team to fit within these requirements. Here are the four pillars of SEO within an enterprise organization.

1. SEO Mitigation: Error Management & Technical SEO

I use the word “mitigation” because I have found that a good percentage of an SEO’s time in larger organizations is spent identifying issues after a project has been launched. For instance, having to go back to the engineering teams and request that bugs be filed to make the necessary corrections. If only the issues had been identified before the launch, the company could have saved time, effort and money. The SEO responsible for mitigation works with the engineering teams as well as project and product managers from the start of a project and remains involved. Education is also key, so that those involved understand the nuances of SEO well enough to either ask questions before making decisions or make the decisions themselves, saving the company time and effort in the long term.

2. SEO Analysis/Reporting: Calculating Assumptions & Reporting on Successes

Every company needs to understand how much SEO plays a part in traffic and revenue. When it comes to reporting, there are complexities to SEO that other channels don’t have. Google does not provide referring keywords to a site from organic search as it does for paid search. Knowing that organic traffic from Google is x percent of all search traffic and results in $y of revenue, pulling clicks for specific keywords from Google Search Console, and then calculating each keyword’s percentage of all clicks to estimate the revenue for that keyword will give companies better insight into:
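The keyword revenue estimate described in this section can be sketched in Python as follows. It assumes revenue is spread across queries in proportion to their clicks (that is, every click is worth the same, which real data rarely bears out). The function name, query strings, and all figures are hypothetical, and the click counts stand in for a Search Console query export. Because that export may not list every query, the sketch lets you pass the property's overall click total as the denominator, matching "the percentage of all clicks".

```python
def estimate_keyword_revenue(google_organic_revenue, keyword_clicks, total_clicks=None):
    """Attribute Google organic revenue to queries in proportion to their clicks.

    google_organic_revenue: revenue attributed to Google organic traffic (e.g. from analytics).
    keyword_clicks: mapping of query -> clicks, e.g. from a Search Console export.
    total_clicks: all organic clicks for the property; defaults to the sum of keyword_clicks.
    """
    if total_clicks is None:
        total_clicks = sum(keyword_clicks.values())
    if total_clicks <= 0:
        # No clicks recorded: nothing can be attributed.
        return {query: 0.0 for query in keyword_clicks}
    return {
        query: google_organic_revenue * clicks / total_clicks
        for query, clicks in keyword_clicks.items()
    }


if __name__ == "__main__":
    # Hypothetical figures: $150,000 from Google organic, 1,000 total clicks.
    clicks = {"red running shoes": 300, "trail shoes": 100}
    print(estimate_keyword_revenue(150_000, clicks, total_clicks=1_000))
    # {'red running shoes': 45000.0, 'trail shoes': 15000.0}
```

Keeping the denominator explicit makes the main assumption visible: if you divide only by the clicks of the queries you exported, every listed keyword's share, and therefore its estimated revenue, is inflated.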

An SEO who can make these calculations and report on the performance to key stakeholders is an important part of the larger SEO piece.

3. SEO Project Management: Determining Growth & Managing Projects for SEO

While making corrections and reporting on the successes of the work on SEO is important, so is growth. Identifying upward trends in searches and gaps in current or past SEO efforts is imperative to the success of a good enterprise SEO team. A project manager is tasked with initiatives identified on a larger scale that impact a large portion of the website, including:

The project manager in SEO would focus all their time and energy on getting teams to commit to delivery dates and keeping it all organized across multiple teams, ultimately resulting in revenue growth from SEO.

4. Relationship Building: Championing SEO to Stakeholders & Other Teams

The final piece of the enterprise SEO puzzle is the ability to build and engage in relationships across the organization. I usually recommend that the SEO team begin with the first three roles mentioned above, and add the relationship-building team member later. The alternative is to have the SEO team’s manager or director drive relationship building, or to instill it in each team member as they engage with others in the organization. Build relationships in engineering, creative, legal and public relations, among others. SEO touches every aspect of the organization and will, at some point, require support from one or more of these teams. Having a solid relationship with the members of those teams will get buy-in for SEO initiatives faster and more efficiently, ultimately leading to the overall growth of SEO and the company at large. While interviewing at REI, we discussed several positions opening up on different teams within the organization. The interesting part about these positions is that rather than placing SEO in marketing with the paid search, social media and email teams, these positions were program manager roles. The roles are defined by the core strengths every enterprise SEO should have. These include:

In a sense, these roles were covering the four pillars in one role as an individual contributor. As the person in the role becomes successful, teams will then be built out to support each strength. In the end, this will develop a strong team and presence for SEO that drives the success of the business. Ultimately, I decided to accept a role at Groupon. The company has a strong handle on SEO, covering each required aspect in clearly defined roles. This is what works for Groupon and allows the team to develop roles around the four pillars. It seems that some companies have SEO roles and teams that are moving away from marketing and splitting into subject matter expert (SME) or individual contributor (IC) roles sitting on engineering, content/creative, reporting, and marketing teams, then coming together to communicate from time to time. Lastly, these roles require the ability to report revenue, prioritize projects and communicate all of that up the chain. All of this is a big shift in recent years in how companies structure and plan for SEO, which is beneficial for the company and the industry as a whole.

More Resources:

SEO via Search Engine Journal https://ift.tt/1QNKwvh March 28, 2019 at 08:25AM

https://ift.tt/2Yvtaus

Ahrefs To Compete With Google Search & Share The Wealth With Publishers https://ift.tt/2OxAC3x

Yesterday, Dmitry Gerasimenko, the CEO of Ahrefs (a beloved SEO toolset provider), announced on Twitter that his company will be building a search engine to compete with Google. He acknowledged that it sounds crazy, but said he wants to do it for two reasons: (1) the DuckDuckGo reason, to provide a search engine that values your privacy and doesn't use private data to benefit itself; and (2) to share 90% of the profit they make directly with the publishers whose content they show and use in their index. Now that sounds cool!

When I wrote about this at Search Engine Land yesterday, I was thinking, wait, April Fools' Day is right around the corner. But it is too far away for this to be an April Fools' joke, right? So I posted a poll on Twitter asking whether you think Ahrefs can do it. I mean, they have a lot of the tech already. They already crawl the web, index content, discover links, and evaluate those links. But they are also missing a lot of the tech, including ranking factors, machine learning, etc. Still, Dmitry seems earnest about building it out and building it out the right way. Can they compete? I mean, we've seen lots of companies, both large and small, well funded and bootstrapped, try. The only one coming close is Bing, backed by Microsoft, and heck, they are still miles and miles away from being a serious competitor. IAC's Ask.com failed. Yahoo failed. You can see the full list here. DuckDuckGo is trying but is not even close to Bing. Is there room for another player? Can Ahrefs really compete? Take my poll on Twitter; you may have to click through:

Forum discussion at Twitter. SEO via Search Engine Roundtable https://ift.tt/1sYxUD0 March 28, 2019 at 07:34AM

Google: It Is Okay To Redirect Lower Quality Content Pages To Better Pages https://ift.tt/2YzZs7k

Google's John Mueller said in a video hangout the other day, at the 50-minute mark, that redirecting a low quality page to a higher quality page won't hurt the higher quality page. Google will evaluate the content on the final destination page, not the content of the redirected page. It is a short blurb in the video, so here it is, starting when he begins talking about it:

Here is how Glenn summed it up:

Here is the transcript: If a story has been redirected because it was thin content and many years old, do the negative effects of the article kind of get forwarded on with the redirection? Forum discussion at Twitter. SEO via Search Engine Roundtable https://ift.tt/1sYxUD0 March 28, 2019 at 07:21AM
